Acoustic Learning, Inc.

Absolute Pitch research, ear training and more

Perfect pitch requires categorical perception. I've been convinced of this for a while now. For a quick-n-dirty example of what this means, open your mouth and start saying the vowel ahhhhh. Now keep saying the vowel, but gradually close your mouth. You will hear two simultaneous but separate phenomena: you will, of course, hear the sound constantly changing as your mouth closes-- but you will also perceive that the vowel continues to be ah until suddenly it snaps into another vowel. You can open and close your mouth repeatedly (ah-oo-ah-oo) and, even though you can clearly discern that the sound is gradually sliding along a continuum, you will only ever perceive a few distinct vowels. Now compare this to your perception of musical sound. If you hear a sound sliding from one frequency to another, you can hear the slide-- but that perception of distinct categories, with that sudden snap from one to the other, is absent. Perfect pitch is not the ability to name notes. Not really. Perfect pitch is when your mind stops perceiving the pitch scale as an infinite continuum and starts perceiving it in seven discrete chunks.

It seems fair to say that there are seven pitches, rather than twelve. An octave is divided into twelve pitches, but in Miyazaki's experiments on speed of pitch perception, the "white keys" (major scale tones) were identified more quickly than any of the "black keys" (flats and sharps). This suggests to me that the magical number seven, plus or minus two, seems to apply to colors (ROYGBIV) as well as pitches (CDEFGAB). Listeners are able to hold the seven primary pitch categories in memory for immediate identification and recall; and, when presented with a pitch that is similar to one of those seven, an extra moment's thought will bring the adjacent category to mind. This appears to be similar to how we identify unusual colors-- when presented with an odd color, we immediately recognize it according to its primary category and then start making further judgments. Sky blue is just "blue" until you pay that extra moment of attention to give it the specific name. So while it will be ideal to learn all twelve pitch categories, the major seven are the critical ones.

It seems fair to say that there are seven pitches, rather than the 100-plus that are within the range of human hearing. If you recognize that pitch class and octave are two separate qualities of a musical tone-- a distinction made as early as 1913-- and combine that with the documented observation that absolute listeners are prone to making octave errors, then it seems obvious that listeners who are able to identify "100-plus" pitches are really making two separate categorical judgments: one judgment based on the seven primary pitch classes, and a second judgment based on the seven primary octaves used in musical performance. Even then, the predominance of octave errors suggests that absolute listeners don't have categorical perception of musical octaves, but make their best guess from the apparent "thinness" of the tone. So tone height is a quality that absolute listeners are aware of, but they aren't aware of it as height.
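The "two separate judgments" idea can be made concrete with a little arithmetic. Here is a minimal sketch that splits a frequency into a pitch class and an octave. It assumes twelve-tone equal temperament with A4 = 440 Hz and the usual MIDI numbering (A4 = 69); the function name `split_tone` is just an illustration and has nothing to do with ETC.

```python
import math

# Twelve pitch-class names, starting from C (sharps only, for simplicity).
PITCH_CLASSES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]

def split_tone(frequency_hz, a4_hz=440.0):
    """Return (pitch_class, octave, cents_off) for a frequency.

    Assumes 12-tone equal temperament and MIDI numbering (A4 = 69).
    cents_off is how far the tone is from the nearest tempered pitch.
    """
    midi_float = 69 + 12 * math.log2(frequency_hz / a4_hz)
    midi = round(midi_float)                # nearest tempered pitch
    cents_off = 100 * (midi_float - midi)   # tuning error in cents
    pitch_class = PITCH_CLASSES[midi % 12]  # judgment 1: which chroma
    octave = midi // 12 - 1                 # judgment 2: which octave
    return pitch_class, octave, cents_off

if __name__ == "__main__":
    for hz in (261.63, 440.0, 523.25):
        print(hz, split_tone(hz))
```

An octave error, in these terms, is getting `pitch_class` right while getting `octave` wrong.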

Learning perfect pitch, therefore, should be both learning to make categorical judgments among seven pitch classes, and learning to recognize tone height as a separate quality that isn't height. The second part's the stumper. As far as most of us are concerned, pitch is the height of a tone, and somehow we need to pull this apart. For the first part, though, we may actually have a road map of how to do it.

The problem is to retrain ourselves to hear a single dimension, vibratory sound frequency, as discrete categories instead of as an infinite continuum of values. When I was looking at experiments that were testing how categories were formed in adult brains, most of the experiments I found trained observers to form categories that were based on multiple dimensions, or at least categories which changed more than a single value between them. Experiments such as these demonstrated that adults are capable of forming new perceptual categories, and could do so based on near-unconscious observation of minute differences, but didn't persuade me that adult brains are capable of learning categories when there is only one dimension to be learned-- especially when we are already well familiar with that dimension as a non-categorical perception. But then I read this 1994 paper from Robert Goldstone in which observers were trained to categorize squares according to their brightness, and in doing so gained heightened perceptual sensitivity to each brightness category. The observers were only trained for about an hour, so they didn't gain actual categorical perception for these levels of brightness, but I see the takeaway message as this: after only an hour of training, the categories had started to form. The training was nothing more than observers seeing different members of each brightness category and actively labelling each one with its category name, after which they were told whether or not they were right. If only an hour of this training were enough to begin the formation of categorical perception, then you might think that a continuation of this training would be enough to complete the formation of categorical perception.

Yet merely naming pitches and getting feedback isn't enough to learn perfect pitch, no matter how long you train. We've known that since 1899. Anyone who practices naming notes will get better at it, but there's no evidence that this "skill" is anything like perfect pitch, in that it would actually begin to develop categorical perception for each of the tones. I suspect that there's a subtle and critical difference between Goldstone's procedure and what's been tried for more than a century: Goldstone's procedures did not present just one example for each category. His procedure presented multiple different examples within every category. If the same approach could work for turning pitch into a categorical perception, then the procedure will require training not with one precisely-well-tuned sample of each pitch, but with many different poorly-tuned samples of each pitch. Categorical perception might therefore be induced, not by hearing the perfectly-tuned ideal exemplar of each pitch and trying to remember it, but by actively sorting various bad examples into their appropriate categories. This, theoretically, could encourage our brains to start defining certain ranges of sound as belonging to this pitch category or that pitch category, thereby naturally developing a tendency to categorize every pitch we hear.

If sorting out-of-tune tones into their proper pitch categories could start to develop perfect pitch, the obvious question is which tones should be sorted. For this we turn to recent developments in phonetic science, and the idea of a bimodal distribution model. Traditional phonetics asserted that we learned different letter sounds by minimal pairs-- by hearing them change from one word to another. That is, if we learned what a bat was, and then discovered that a pat was not the same thing, then this knowledge would allow us to compare pat and bat and learn the difference between p and b. This is essentially the current mechanism of Absolute Pitch Avenue-- and although it does sharpen one's listening skills, it does not induce categorical perception. The bimodal distribution model presents an alternative that is closer to Rob Goldstone's procedure. The idea here is that, when we hear letter sounds in the real world, these sounds are produced by hundreds or thousands of different people, and so none of them are going to be exactly the same-- but they are going to cluster around the categorical ideal in a normal distribution. So because we most regularly hear an oo sound near its categorical ideal, our minds infer an average of all the oo sounds we hear and make that the categorical center. As the oo sound moves away from the center, we hear it less and less regularly... and then it starts to sound like other vowels that we know are supposed to be oh. So the decreasing regularity clues us in to the change toward the oh vowel in the one direction, while the exact same process is working toward the oo in the other direction, causing us to form a categorical boundary between oo and oh. The bimodal distribution model suggests to me that presenting out-of-tune tones in a normal distribution around adjacent pitch categories could be a way to induce categorical perception of those pitch classes.
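As a rough illustration of what "out-of-tune tones in a normal distribution around adjacent pitch categories" might mean in practice, here is a small sketch. Everything specific in it is an assumption made for the example: two categories centered 100 cents apart (one semitone), a spread of 20 cents, and middle C taken as 261.63 Hz. None of these values come from ETC.

```python
import random

def sample_bimodal_cents(n, centers=(0.0, 100.0), spread=20.0, seed=None):
    """Draw n mistuned tones, each clustered around one of two category centers.

    Returns a list of (true_center, cents) pairs. The centers and spread
    are hypothetical values chosen only for illustration.
    """
    rng = random.Random(seed)
    samples = []
    for _ in range(n):
        center = rng.choice(centers)                        # pick a category
        samples.append((center, rng.gauss(center, spread))) # mistune around it
    return samples

def cents_to_hz(cents, base_hz=261.63):
    """Convert an offset in cents above base_hz into a frequency in Hz."""
    return base_hz * 2 ** (cents / 1200)

if __name__ == "__main__":
    for center, cents in sample_bimodal_cents(5, seed=0):
        print(f"category {center:5.1f}  tone {cents:7.2f} cents  {cents_to_hz(cents):7.2f} Hz")
```

Most of the tones land near one center or the other, and relatively few land in the ambiguous region between them, which is the pattern the model says should produce a boundary.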

It doesn't seem likely that a child who learns perfect pitch will have done so by hearing thousands of out-of-tune pitches that they sort into categories. It seems more likely that they would be exposed to thousands of well-tuned pitches in different contexts. However, there is this still-magical phenomenon known as the "critical window" in which, from the ages of 3 to 6, children learn about language and sound differently than adults. I'm willing to chalk up the difference in learning to the critical window-- children may not need multimodal distributions to learn perfect pitch because they have no preconceptions about what pitch frequencies are all about, but adults need to train with a multimodal distribution to overcome their lifelong biases to hear pitch non-categorically.

This training process is what I'm going to realize in the next version of the Ear Training Companion: sorting distributions of tones into categories. I may have to start rebuilding ETC from scratch, if only because Realbasic is incapable of generating microtones on non-Mac computers. But even as I do, I'm going to have to seriously consider how to overcome the second part of the training: getting listeners to perceive pitch height as separate from pitch class, and to recognize it as something other than height.

I think I understand what separable pitch height is, by now, and I know I've written about it before: it's the overtone series of a given pitch. In fact, I know I've written extensively about this before, expounding about partial octaves and incremental pitch height achievable by manipulating overtones. I am sure that non-absolute listeners perceive this kind of pitch height. I recall an experiment in which listeners judged the pitch of two tuning forks; each played the same tone, in the same octave, but one was made of a thinner metal. The thinner-sounding tone was judged to be in a higher octave than the other, regardless of listeners' musical experience. I also have successfully gotten non-musicians to sing perfect octaves by asking them to sing "the same tone, but lighter (or thinner)." Learning to perceive this kind of pitch height isn't a training goal, especially if absolute listeners don't perceive it categorically anyway. No.. the problem is to persuade our minds that pitch frequencies, by themselves, are not fundamentally "higher" or "lower" than each other.

The consequence of interpreting frequency as pitch height is easy to imagine. Let's say that a person trained with multimodal distribution and (let's be optimistic here) successfully formed firm categories for each of the 12 pitches between middle C and high B. Great. Then the same person is presented with a high C. Why would this person, who has unconsciously believed all their life that pitches differ in height, place this in the same category as middle C, which is 12 whole categories distant from it? Why would they not become utterly bewildered by this out-of-bounds sound or, perhaps, place it in the same category as its neighboring B? I present these as rhetorical questions, but they must be answered. In the We Hear and Play process, new octaves are introduced by telling a child that the tones are the same pitch class, just "brighter" or "darker" in color, and a child's mind is okay with that. Adult minds won't buy that so easily. Absolute Pitch Avenue successfully teaches an adult mind to hear chroma, and the game works toward perceiving octave equivalence, but I don't know if that will be enough. I reject the idea of using unnatural tones, like Shepard tones that have no discernable height (or, looked at another way, simultaneously have every height), because I have no evidence to suggest that learning with unnatural tones would transfer to natural tones. There must be some kind of process by which a normal mind can accept octave equivalence as the greater truth. I just need to find it.

But, while I'm figuring that out, I do have a solution in hand for training categorical perception. Which means, in turn.. I've got a new game to design.

[And yes, updates to ETC will continue to be free!]

The Ear Training Companion v7 is finally ready. It teaches two types of listening known to be critical to absolute-pitch hearing-- chroma detection and categorical perception-- and now we'll find out whether this is enough.

We've known for decades that an absolute-pitch judgment is based on the "chroma" of a sound. For this reason, over the years we came to believe that the magic key to having absolute pitch was becoming able to perceive chroma. We knew that the chroma was generated by the fundamental frequency of a tone, even though we couldn't explain exactly how, or even understand exactly what "chroma" was. Experimental attempts at teaching people to hear chroma dried up in the early 1970s after nearly a century of failure, but others continued to soldier on, each trying in their own way to persuade listeners to hear tone chroma. These approaches supposed that certain listeners could hear and recognize chroma, and the rest of us couldn't, and that we therefore needed to learn to hear chroma to "have perfect pitch".

The Ear Training Companion showed that to be untrue. Using the mechanism of perceptual differentiation, the game successfully taught listeners to hear chroma. Before ETCv6, no training approach had been able to unambiguously claim that its listeners were truly perceiving chroma, because every other system had left it up to the listener to guess at what the "chroma" could be, making them uncertain whether the tonal qualities they were fixating on were in fact chroma. ETC, on the other hand, used a natural principle of human perceptual learning to make listeners isolate and perceive tone chroma. It worked. As I trained with ETC myself, I started to hear tone chroma, and I started to recognize it. I started to become able to identify certain tones-- most notably an A-flat-- and I thought the problem had been solved. Slowly, though, I realized, to my dismay, that no, it hadn't. I had become able to identify and recall a few pitches, yes, but every training system throughout history has taught its listeners to do that. What I began to notice is how often it happened that I would hear a tone, know that I was perceiving its chroma, recognize the chroma as something I had heard before, and still be completely unable to identify it. Evidently, perfect pitch was not the ability to hear and recognize chroma, as we had all assumed. Having learned to hear chroma, and having become able to recognize it, I still needed to learn how to identify it.

I began to research how we learn to identify phenomena similar to pitch. Of course, I had to decide what "similar phenomena" could be. The most obvious is color. Color, like pitch, is a judgment based on a single frequency value; and, also like pitch, that single value is not naturally perceived in isolation. In developing ETCv6, I had considered that perceptual differentiation likely played a part in learning color-- seeing a green apple and a green leaf probably helped us abstract the shared quality of green. Maybe also, I thought, it made a difference that the world around us is color-coded; there are practical reasons to learn that a stoplight is red, moldy food is blue, and so on. I seriously considered that maybe the best way to learn tones could be to make tone-object associations, where the objects could be anything: familiar melodies, or personal property, or even programs on one's computer. But after finding and reading some research on tone-object associations, it seemed that these were little better than mnemonic devices, and in that regard I had to agree with Evelyn Fletcher: when you use a mnemonic device to learn something, all you really learn is the mnemonic device. The mnemonic device interposes itself between you and the actual learning and, when it fails, you have no fundamental knowledge from which to derive the correct answer. Furthermore, it seemed implausible that our perceptual systems would learn to identify colors, or any similar phenomenon, only through associative-naming devices. There must be some way we learn them perceptually.

Gradually, categorical perception came to my attention. This seemed a promising common thread. Colors and phonemes (language sounds) are both perceived categorically, and I've known for a long time that absolute listeners perceive pitches categorically whereas we non-absolute listeners don't. Reading up on categorical perception indicated to me that it was a process of perceptual learning, not associative learning, although identifying associations are naturally made during the learning process. Categorical perception therefore seemed like the missing link: categorical perception enables people to identify similar things uniquely; it's a perceptual learning process; and it's a skill that, for pitch, only absolute listeners have. Chroma may be the basis for absolute judgment, but maybe categorical perception is how absolute listeners identify the chroma they hear.

The perceptual mechanism for learning categorical perception was not easy to come by. There is no shortage of research on categorical perception, much of it originating with Robert Goldstone, but it took me a long time to discover what I was looking for. Categorical-perception papers generally demonstrate that human beings are excellent at pattern recognition. When presented with complex abstract figures we will unconsciously intuit whatever subtle patterns exist in their shapes, allowing us to learn that this kind of squiggle is a goob and this kind of squiggle is a geep. It may not seem obvious, but if you think about it, most of the objects you encounter are complex abstract figures, and the fact that you learn to identify this group of squiggles as cat and this other group as dog is fundamentally no different from learning to identify goobs and geeps. But what I was looking for was a perceptual mechanism by which people learned simple abstract figures. When you're presented with a group of characteristics, or multiple dimensions, you can assess how all the different components relate to each other and discern patterns in them. When you have only a single characteristic, drawn from only one dimension (such as chroma, determined by vibratory sound frequency) it can't present a pattern. It just is what it is. So I was baffled as to how it might be learned.

But then I looked more closely at one of Rob Goldstone's papers. This particular study ("Influences of categorization on perceptual discrimination", 1994) actually did show the development of categorical perception for a single characteristic based on a single dimension. In this case, people learned to judge the brightness of a square-- and brightness, like color, has long been considered a sensory characteristic conceptually similar to pitch. And how did the researchers do it? By nothing more complicated than having people judge whether squares belonged in category A or category B. However, there was more than one square in each category. When we learn to categorize cats and dogs and goobs and geeps, we can figure out patterns from all the different types of information presented in each particular example. When we learn to categorize brightness, there's only one piece of information presented in each example, so we can't form any pattern from it-- but when we observe many examples over time, then we can form patterns from the information we gather, bit by bit. So the key to training categorical perception for a single-dimensional phenomenon, like brightness or like musical pitch, could be to present multiple different examples of each category, and to present them in a pattern that causes categorical perception to develop.

So what could that pattern be?

I suspected the pattern could be the bimodal distribution model. This hypothesis suggests a mechanism for how we learn phonemes categorically. Its writers suggest that, when we learn our native language, we hear hundreds of different people saying the "same" phonemes, yet none of them sound exactly the same. When your friend says "oo", it sounds different from when your teacher says "oo"-- yet the two different "oo" sounds still fall in the same essential frequency range. As you hear thousands upon thousands of different examples, your mind averages them out, and forms a mathematical construct of each frequency-range category: the position of its ideal example (the "categorical center") as well as the points at which a phoneme stops being one sound and starts being another (the "categorical boundary"). And the pattern of these thousands of examples is, for each category, a normal distribution-- otherwise known as a "bell curve".
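To show what "averaging them out" could look like numerically, here is a toy sketch. It takes the center of each category to be the mean of its examples and puts the boundary halfway between neighboring centers, which is only reasonable if the categories are equally common and equally spread. That simplification is mine, not a claim about how the mind or the phonetics literature actually computes it.

```python
from statistics import mean

def estimate_categories(labelled_examples):
    """Estimate category centers and boundaries from (label, value) pairs.

    Center = mean of a category's examples.
    Boundary = midpoint between adjacent centers (assumes equal spread
    and equal frequency for every category; a deliberate simplification).
    """
    by_label = {}
    for label, value in labelled_examples:
        by_label.setdefault(label, []).append(value)
    centers = {label: mean(values) for label, values in by_label.items()}
    ordered = sorted(centers.values())
    boundaries = [(lo + hi) / 2 for lo, hi in zip(ordered, ordered[1:])]
    return centers, boundaries

if __name__ == "__main__":
    # Made-up examples, in cents, purely for illustration.
    heard = [("low", -10.0), ("low", 10.0), ("high", 90.0), ("high", 110.0)]
    print(estimate_categories(heard))
```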

I began designing ETCv7's new game, "Absolute Pitch Painter", based on these two principles. Players would be presented with a normal distribution of different pitch sounds, and the action of the game would be to sort them into categories. Because I already had introduced the idea of eggs that played tones corresponding to colors (Absolute Pitch Avenue) it wasn't a far stretch to imagine that eggs playing tones could be dipped in different vats of dye according to their category. I'll spare you the details, but the game came together relatively quickly, and I began playing it, with one question burning in my mind: will this really work? Will this truly teach categorical perception of pitch, or will it be merely an interesting failure? I didn't want to release the game unless I was sure it would work. So I designed the game to measure and report whether or not it was in fact teaching me categorical perception.

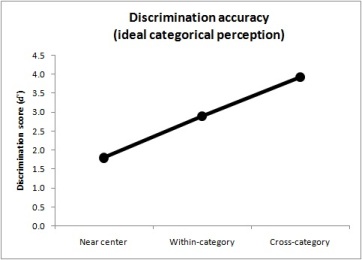

You can measure the effect of categorical perception, as it develops, because it changes how you perceive differences. Members of the same category will seem more similar to each other, and you become less able to tell them apart. Members of different categories will seem more different from each other, and you become more able to tell them apart. This means there are three ways to verify the presence of categorical perception: subjective impression, identification, and discrimination. I kept each of these criteria in mind as I began to play the game.

I was initially encouraged by my own subjective impressions. The game begins with only two categories, and of course I made a terrible muddle trying to figure out whether the tones in the middle of the range belonged to the higher or lower category. But gradually I began to notice that tones on this side tended to sound like this, and tones on that side tended to sound like that. I began to be able to make decisions because each tone began to sound more like it belonged either to this category or that category. Or, at least, so it seemed to me. I was getting better at making decisions, as evidenced by my increasing scores on the high-score table... but was this happening due to categorical perception, or was I just getting better at remembering which of the middle tones were which?

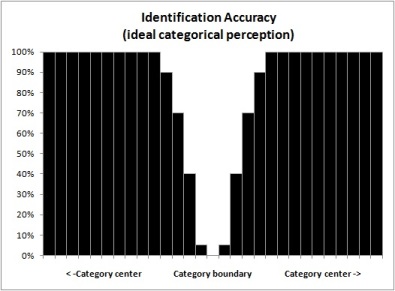

I was further encouraged by my identification scores, which are aggregated and computed after each game. When you perceive categories, you identify items as belonging to the same category until they move across the categorical boundary, and then snap! you suddenly start identifying the next category. I've mentioned before that the best example of this is when you say "oo" and let it slide into "ah"-- even though the sound is, clearly, constantly changing, you only hear two vowels, and you can tell that there's that sudden moment when one becomes the other. That "sudden moment" is a small, small area near the category boundary where you really can't tell whether it's one or the other, and that's the only place where you're really going to make identification mistakes. What this means is that, if you were to make a chart to demonstrate idealized categorical perception, you would see near-100% accuracy right up to the categorical border, and then a sudden sharp drop at the border, and an equally-sudden spike once you were across the border. Like this:
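The shape described here can be sketched with a simple model. The code below assumes two categories on a 0 to 100 scale with the boundary at 50, and assumes the chance of calling a tone "upper" follows a steep logistic curve. Both the logistic form and the numbers are illustrative assumptions; this is not how ETC computes its charts.

```python
import math

def identification_accuracy(position, boundary=50.0, steepness=0.5):
    """Idealized chance of labelling a tone with its correct category.

    The probability of answering "upper" is modelled as a logistic curve
    centered on the boundary (an assumption made for illustration).
    Accuracy is then the probability of giving the correct label.
    """
    p_upper = 1 / (1 + math.exp(-steepness * (position - boundary)))
    return p_upper if position >= boundary else 1 - p_upper

if __name__ == "__main__":
    for pos in range(0, 101, 10):
        bar = "#" * round(40 * identification_accuracy(pos))
        print(f"{pos:3d} {bar}")
```

Accuracy stays close to 100% on both sides and dips toward chance (50%) only in the narrow region around the boundary.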

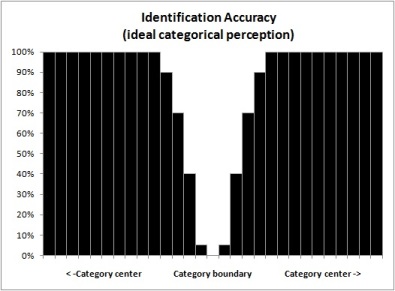

So you can imagine I was pretty jazzed when I charted my own identification accuracy, and it looked like this:

Even so, I still couldn't be any more certain that this represented categorical perception than I was certain about my subjective impressions. This result didn't necessarily mean that I had learned to recognize where one category started and another one ended. It could still mean that I had become reasonably skilled at identifying the obvious members of either category, and just got confused at the more-ambiguous middle points.

The final measure would be discrimination-- the "level up" portion of the game. Because of the way the game is designed, I would have to complete the entire session before I could know whether or not it had actually worked. But if it had worked, then I knew exactly what my results would look like. If I were perceiving these sounds categorically, then:

- Sounds at the category center would seem most alike, and I would have the most trouble telling them apart.
- Sounds within the category, but further from the center, would be easier to tell apart.
- Sounds that went across categories, being most different, would be easiest to tell apart.
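The three predictions above amount to sorting each comparison into one of three bins and scoring each bin separately. Here is a minimal sketch of that bookkeeping. The 0 to 100 scale, the boundary at 50, the centers at 25 and 75, the "center" radius of 10 and the trial data are all invented for the example; they are not ETC's internals or my actual scores.

```python
def pair_type(a, b, boundary, centers, center_radius):
    """Classify a pair of tones as 'cross', 'center', or 'within'."""
    if (a < boundary) != (b < boundary):
        return "cross"                      # tones straddle the boundary
    nearest = min(centers, key=lambda c: abs(c - (a + b) / 2))
    if abs(a - nearest) <= center_radius and abs(b - nearest) <= center_radius:
        return "center"                     # both tones hug the category center
    return "within"                         # same category, away from center

def accuracy_by_pair_type(trials, boundary=50.0, centers=(25.0, 75.0),
                          center_radius=10.0):
    """trials: iterable of (tone_a, tone_b, answered_correctly)."""
    totals = {}
    for a, b, correct in trials:
        kind = pair_type(a, b, boundary, centers, center_radius)
        hits, count = totals.get(kind, (0, 0))
        totals[kind] = (hits + int(correct), count + 1)
    return {kind: hits / count for kind, (hits, count) in totals.items()}

if __name__ == "__main__":
    # Invented trials, for illustration only.
    trials = [(22, 28, False), (24, 27, True), (5, 12, True),
              (40, 45, True), (48, 53, True), (45, 56, True)]
    print(accuracy_by_pair_type(trials))
```

If perception is categorical, the "center" bin should score lowest, "within" higher, and "cross" highest.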

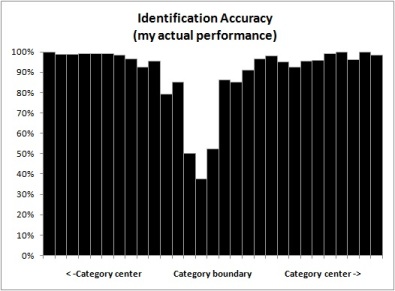

A chart of this result would, ideally, look like this:

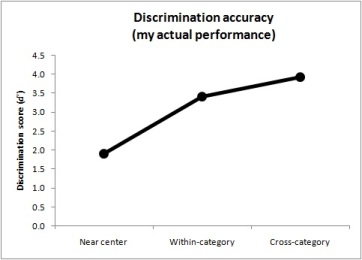

So I finished the level-up portion of the game. I exported my scores, compiled them, and imported them. Then I took a deep breath and clicked "Chart". And this is what I saw:

That deep breath came out as a deep sigh of relief. It had worked. I was perceiving these sounds categorically. I began plowing through the rest of the level-- and as I began the third level-up session, I considered what the new result should look like. If this program was indeed training me to hear categorically, and if I was getting better at it, then sounds near the category center should continue to become increasingly similar to each other, until I couldn't tell them apart at all. So when I finished the third session and charted the results, you can be sure I was delighted to see this:

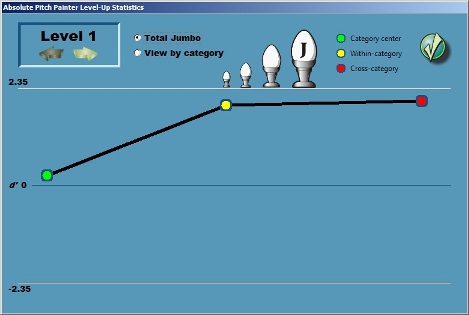

By the time I'd finished the fourth level-up session, I had also created a routine within ETC to automatically generate the charts (and make them look more fun).

So now we begin the next phase of the Ear Training Companion, with renewed optimism. Specifically:

- Absolute listening requires chroma perception; Absolute Pitch Avenue teaches that.
- Absolute listening requires categorical perception; and now Absolute Pitch Painter teaches that.

Even so, I do still think there will be more to the training than this. There has to be. Most obviously, Absolute Pitch Painter only works with the middle range of piano tones, and I need to discover how to make our minds accept higher and lower notes as belonging to these same seven (twelve) categories. I also can't be sure that my mind isn't somehow developing relative categories, rather than absolute categories, in the same way that Interval Loader trains one-note scale degrees. And, most importantly, even if these categories are developed, it will still become necessary to hear these sounds in music, or else the whole effort is nothing more than a party trick. It is entirely possible that I have already solved some of these issues with Absolute Pitch Avenue and Chordhopper-- that's why I designed them in the first place-- and maybe I will want to bring back Note Boat (with a better implementation than the buggy v6). But at the very, very least, Absolute Pitch Painter stands poised to do for categorical perception what Absolute Pitch Avenue did for chroma: if APP itself isn't the final piece of the puzzle, then it will surely show us the shape of the next piece.

I've been playing ETC v7 and carefully assessing, subjectively and objectively, as best I can, whether it's actually teaching me categorical perception. And so far, to borrow a phrase from the Magic 8-Ball, signs point to yes.

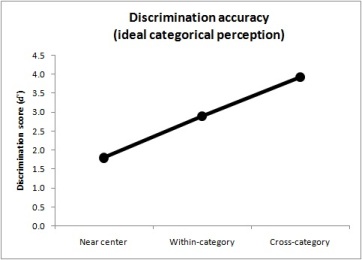

My accuracy for discrimination-- telling apart fine differences-- seems to be conforming to the ideal pattern. The ideal pattern for categorical discrimination is to do poorly near the categorical center, better when making within-category judgments, and best when making cross-category comparisons, which would make a chart look like this:

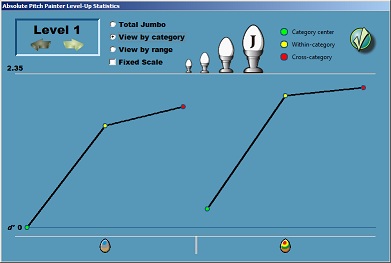

Well, here's what my chart looked like at the end of Level 1. Each category represents about half the middle octave.

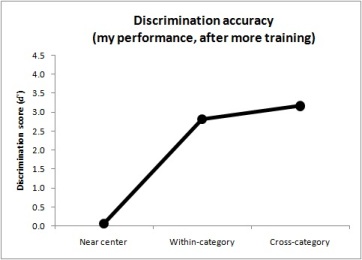

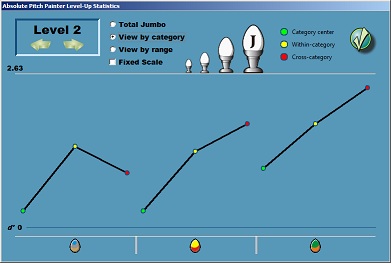

And here's my chart at the end of Level 2, where the top category has been split in half:

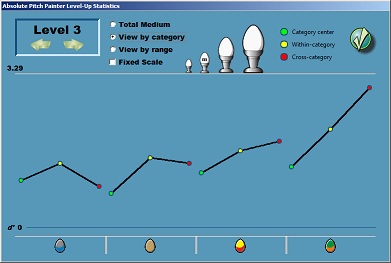

I'm not too terribly concerned about being somewhat off the ideal in the lowest category. I'm more interested in the results for the other two categories. You see, those upper two categories were an even split of the upper category from Level 1. This means that the border between them, where the chart shows I was best at making fine distinctions (both the red dots), was previously the center of the upper category. And previously, at the center of the upper category, I was the worst-- barely able to tell the sounds apart at all. (Note that the green dot on the right in the first chart is nearly zero.) And even though I'm only halfway through Level 3, my results are still encouraging:

These charts are just an indirect measure, though, of what I'm supposed to be learning. I'm supposed to be learning how to identify these sounds. The game is obviously teaching me this, to some extent, or I wouldn't be able to progress to new levels. My subjective experience, though, seems to support the objective measurements.

The only way I've found to really win the game is to never, ever guess. This is a practical necessity. Tones near a category boundary are naturally difficult to identify. This means that tones near the boundary will always, always trip you up, even if you've learned the categories perfectly. So when I hear a tone that I don't immediately recognize, I drop the egg at the visual boundary between categories on screen, but I don't actually color the egg. Then, as I continue to find eggs that are not difficult to identify, I keep coming back to the mystery egg. Eventually, I will discover that the mystery egg sounds more like this category than that category, and I will be able to make a positive identification without guessing. I strongly suspect that this process, although it may seem like "cheating", is an important part of the game, and is helping my mind both to pinpoint where the boundary is and to better recognize the qualities that define each category. It takes some discipline to make myself not guess-- the keyboard shortcuts make it too easy to respond quickly, so I make myself play with dragging-and-dropping-- but I always get higher scores when I do.

And the most encouraging aspect of the game is that I can play it without ever guessing. When I hear "good" members of any of the four categories, there's no ambiguity and no doubt in my mind. The tone sounds like whichever category it belongs to. I often do have to give myself a moment to recognize it, though, which is another reason I can't use the keyboard shortcuts. The keyboard shortcuts encourage me to play too quickly, and this causes me to make interesting mistakes. Sometimes I'll feel a pattern in the keystrokes, and I'll suddenly press the next key in that supposed pattern instead of the key for whatever tone I just heard. Other times, making one mistake sets forth a cascade of mistakes, because I start pressing the keys for the tones I previously heard instead of the tones I am currently hearing. Still other times, I find that having my fingers on the keys encourages me to reflexively press a different key for a "higher" or "lower" tone, even when I can tell that the tone is in the same category. Nonetheless, if I see during a game that there's no way I'll make the high score I'll need to advance, I will move from the mouse to the keyboard to see just how quickly I can go and still listen for chroma qualities instead of relative distances.

I still don't know if the sounds I'm learning are absolute or relative. I seem to be recognizing a unique quality of the sounds which could very well be chroma, because it appears to be independent of comparative "height". However, this quality could just as easily be a chroma-like attribute of C-major scale degrees. Maybe I'm even focusing in on qualities of piano sounds that won't be present when I hear the same pitches from other instruments. But I'm not yet worried about this, for two reasons. One is that I want to learn the scale degrees anyway. Another is that if this kind of categorical-perception training does work, as it seems to be doing, then I should be able to adjust the process, and go through it again, to learn what I didn't get this time around.

I've been playing Level 4 now for a while. Moving through the Small and Medium games was unexpectedly easy. Only the two new categories were featured (![]() and ![]()) and it seemed blindingly obvious which category each tone belonged to. I even got two perfect scores on the Small game without much effort. This made it all the more perplexing when I started in on the Large game, where the three remaining categories were re-introduced, and suddenly I no longer had this same clarity. What's more, my perception of the lowest category (![]()) was still very weak. I decided to take a moment and figure out why I was making these mistakes, because by this time I felt I should know better.

The answer was height. I had come to think of ![]() as "the lowest" category-- therefore, when I heard a tone that wasn't "the lowest", I'd quickly assume that it must be the next-highest category (![]()), and answer incorrectly. Likewise, on the opposite end, I thought of ![]() as "the highest" category, and when I heard a tone that wasn't "the highest", I'd suppose it must be the next-lowest category (![]()), and answer incorrectly. And then, thirdly, because I could hear a very distinct "plunky" quality from the lower tones of the ![]() category, every time I heard a tone I could tell was in the upper half of the overall range, but lower than the highest category ![](), I would guess ![]() unless I distinctly heard that "plunky" quality... and only after seeing that I'd gotten it wrong did I listen more carefully and hear that same "plunky" quality more subtly present. Once I paid attention, it was evident that I was making these same mistakes constantly, and always because of the height judgment.

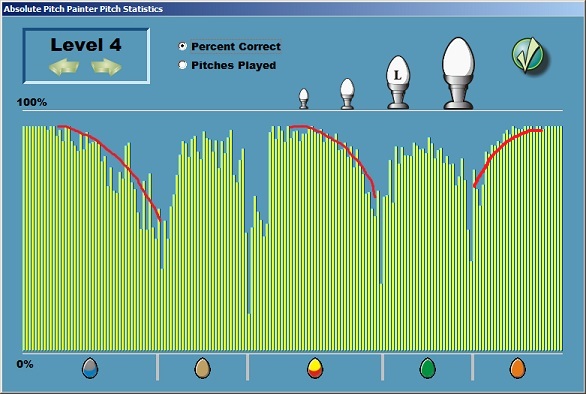

I brought up the pitch-stats screen and saw quite clearly what height errors look like. They curve down toward the category boundary, because as a pitch moves toward the border, the pitch increasingly seems "high enough" or "low enough" to be part of the next category. I've marked them in red, below, to make them even more obvious.

I noticed, of course, that the other boundaries had the sharp drop-off indicative of categorical perception (that is, low ![](), the division between ![]() and ![](), and either side of ![]()). And I quickly realized that my subjective experience of these borders was different. I have a clear sense of what ![](), ![](), and the lower part of ![]() sound like. Each of these areas has a characteristic sound. As I was playing the game, tones within these areas didn't really lose their characteristic sound until they got to the border. Once they passed the border, then they showed more of the other category's characteristic sound. As "abminor" described it in the forum, "[my perception] tells me this is like a color blend between the two categories but one color dominates, so I select the appropriate category based off that." Where I knew the characteristic sounds, I showed categorical judgment, and didn't make height errors.
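The two curve shapes described here, a gradual slope toward the border versus a sharp drop-off at it, can be pictured with a toy model. The sketch below is only an illustration and is not taken from the game or its pitch-stats screen: the logistic formula, the function names `p_correct` and `accuracy_table`, and both slope values are assumptions chosen to make the contrast visible.

```python
import math

# Assumed, illustrative steepness values; they are not measured from the game.
CATEGORICAL_SLOPE = 6.0  # sharp drop-off right at the border
HEIGHT_SLOPE = 0.8       # gradual curve down toward the border


def p_correct(distance, slope):
    """Toy chance of sorting a tone into the right one of two neighboring bins.

    distance: how many steps the tone sits from the border (0 = on the border).
    slope: hypothetical steepness of the listener's judgment near the border.
    """
    return 1.0 / (1.0 + math.exp(-slope * distance))


def accuracy_table(max_distance=4):
    """Return (distance, categorical accuracy, height-based accuracy) rows."""
    return [
        (d, p_correct(d, CATEGORICAL_SLOPE), p_correct(d, HEIGHT_SLOPE))
        for d in range(max_distance + 1)
    ]


if __name__ == "__main__":
    for d, categorical, height in accuracy_table():
        print(f"{d} steps from border: "
              f"categorical {categorical:.2f}, height-based {height:.2f}")
```

In this model both listeners are at chance exactly on the border. The steep curve recovers almost completely one step away, while the shallow curve is still making errors several steps into the category, which is the pattern the height errors showed.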

So I no longer have to wonder whether the categorical perception being taught here is based on height. It can't be, because now I see that you can't form categories if you make judgments based on height. In fact, it suddenly seems obvious why and how chroma and categorical perception work together in absolute pitch judgment, which in turn explains why I was so baffled about how to learn categorical perception along a single dimensional continuum: that is, you can't. The "height" of a tone doesn't change from end to end. You can tell that one tone is higher or lower than another, and you can estimate approximately where a tone sits along the entire continuum of frequency values, but because "height" is a quality that can be detected along the entire range of sound, there is no reason why your mind should mark a particular frequency x as the divisor between any one area and any other area. So your mind doesn't do that. And it won't do that, no matter how much effort you put into training. But once your mind detects some quality that actually does change-- fundamentally, not incrementally-- from one area to another, then it becomes possible for your mind to mark a division between those changes. Chroma is an obvious candidate for that quality. So are scale degrees, for that matter. But height isn't.

With that in mind, I've altered my gameplay strategy. Because I know, now, that I have terrible trouble mistaking ![]() for ![](), and the parts immediately above and below ![]() for ![](), I no longer make it my goal to place these eggs into their respective bins. Rather, when I hear a tone that could belong to ![](), ![](), or ![](), I make a mental guess at what category it belongs to and then set it aside. Gradually I build a big enough pile of eggs from a particular area that I can start sifting through them and finding the boundary, so that I can sort each of them into their respective bins without making mistakes. Being able to do this, by the way, is why the game is structured so you can randomly access the tones, instead of being presented with them one-at-a-time quiz-style. You need to be able to make comparisons, hear the similar qualities between the tones, and make judgments based on their similarities. Once you have formed an idea in your mind of what each category sounds like, you can compare the sounds you hear to that image in your mind, but until that image is clearly and solidly formed there's no other way to know except by comparing the sounds to each other.

In addition to all this, I had a sudden flash of insight about what it means to have "active" absolute pitch-- to be able to sing whatever pitch you want on demand. Having active absolute pitch means that you do not sing a pitch. You sing the quality that you associate with the pitch, and the correct pitch comes out automatically. Compare this to how you produce language sounds. You don't torture yourself trying to remember what the frequencies 240 Hz and 2400 Hz sound like together and then trying to make yourself reproduce those pitches. You just think "ee" and those frequencies are what come out. Likewise, I just now tested myself by singing the "plunky" quality I associate with the lower part of the red/yellow category. This happens to be middle C-- but I just sang it as a quality, without thinking of a melody, without thinking of a height, without even thinking of it as a pitch. Just remembered the quality, opened my mouth, and let it out. And wouldn't you know? It was a C. Not a perfectly-tuned C, no, because the category I'm learning is still rather broad-- but it was a middle C. This, in turn, answers the question I've been beating myself up about for years: when you're trying to identify or produce a pitch, how do you know that you've got it right? How can you confirm your pitch is correct, except by playing a "reference tone" and finding out? The answer is finally clear: because it matches the sound quality you've got in your mind. The sound quality is independent of height-- it's particular only to the pitch category that you're trying to identify (or produce). Therefore, once you have learned the sound quality of that category, then you will be able to identify (or produce) the tone, because no other category has that same quality. The critical element to success is whether the quality you've learned is in fact that essential quality of the category. If you have learned to estimate height, it will not work, because all pitch categories have height. 
If you have learned by trying to apply adjectives to pitches (mellow, twangy, etc.) it won't work, because you become able to describe pitch qualities by being able to recognize pitches, and not the other way around. But once you can detect and distinctly recognize the sound quality that uniquely defines a pitch category... then, you can identify or produce a tone, and you'll know that you've done it right.
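The idea that a pitch has a quality separate from its height has a simple arithmetic counterpart, which the sketch below illustrates. It assumes standard twelve-tone equal temperament with A4 tuned to 440 Hz and the usual MIDI note numbering; the function name `chroma_and_height` is made up for this example. It uses semitone-wide pitch classes, not the broader categories the game teaches.

```python
import math

NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]


def chroma_and_height(freq_hz, a4_hz=440.0):
    """Split a frequency into (pitch class name, octave number).

    Assumes equal temperament and MIDI numbering, where A4 is note 69
    and middle C (C4) is note 60.
    """
    # Nearest equal-tempered note, so slightly mistuned tones still land on it.
    midi_note = round(69 + 12 * math.log2(freq_hz / a4_hz))
    pitch_class = NOTE_NAMES[midi_note % 12]  # the part that repeats every octave
    octave = midi_note // 12 - 1              # the part that only measures height
    return pitch_class, octave


if __name__ == "__main__":
    for freq in (130.81, 261.63, 265.0, 523.25):
        name, octave = chroma_and_height(freq)
        print(f"{freq:7.2f} Hz -> {name}{octave}")
```

The three tuned Cs differ only in the octave number, and the 265 Hz tone, which is a little sharp, still resolves to middle C. That mirrors the experience of singing a C that was recognizably middle C without being perfectly tuned.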