Acoustic Learning, Inc.

Absolute Pitch research, ear training and more

While waiting for Hurricane Frances to do whatever it's going to do, I've updated Phase 11 with all the references from Mark Rush's 1989 dissertation, plus new references from more recent articles that I found through the University of Florida database system.

In describing the research problem, Rush's verbal tone is unsubtly condescending towards David Burge. Rush kicks off the piece by saying that Burge's handbook "...is written in a non-technical, non-scholarly style and, in fact, its very title contains the imprecise and confusing terms-- perfect pitch and color hearing." He refers to Burge's written work as "misleading", "incongruous", and "cryptic". And, after writing 118 pages detailing 30 different methods for teaching absolute pitch (dating back to 1899), Rush dryly presents the following:

Burge's imprecision may merely be the result of a lack of familiarity with the related literature. Regarding the body of knowledge concerning absolute pitch, Burge (1984b) writes, "very little had [sic] been written on the subject-- mostly just old research experiments in the 30s and 40s which don't really conclude much." Likewise, Burge's review of previous attempts to train absolute pitch includes the work of only two writers (Mull, 1925; Hindemith, 1946), and the entire handbook cites only 14 different sources. Indeed, the cover notes proclaim it to be "the only ear-training technique handbook for developing absolute pitch!"

In examining the list of research that I've assembled so far, what quickly becomes obvious is that, despite the great body of research that had been developed prior to Rush's report, there has been no methodological breakthrough in adult absolute pitch training since 1899. Even then, this was known to be true: if you practice memorizing tones, you will get better at naming and recalling tones. Cuddy demonstrated this conclusively in 1970 with her own method. Indeed, if you look at the different methods and similar levels of success across the methods, you could even conclude that the specific exercises in those methods don't matter; it's practice and diligence at tone memorization that does the trick, not the method itself.

Me, I continue to play Interval Loader, for relative pitch, and my high scores just keep getting higher and higher. It's bizarre. I remember Gibson's comment that there is no extinction in perceptual learning-- I believe I will have to look at Meyer's 1899 system, because (according to Rush) Meyer's ability to name notes faded through lack of continued practice. I suspect I will find that his system, like many of the other systems, involves a memorization task rather than a perceptual discrimination task, and thus will be likely to fade without continual reinforcement. By contrast, I find that even if I don't play Interval Loader for a while, each time I come back to it I'm just as competent as before.

Before now, I've been skeptical that absolute pitch was primarily a labeling task. I'm still skeptical, and now thanks to Interval Loader I have a better sense of why I would be. For the most part, playing the game has now become a simple matter of typing. I hear an interval, I press the corresponding button. I'm no longer "figuring out" any of the sounds. What ends my game, then, is when I hit the multiple timbres-- or when I get too many minor sixths. As long as the game doesn't throw too many minor sixths at me, I keep plugging along, but most of the time the m6 will get me. When I take a moment before pressing the key, I manage to recognize the m6 as "the unfamiliar one", but because I identify the other intervals so quickly, it's easy to slip into a pattern of immediate response; then, when an m6 appears, I instinctively pick up on some characteristic that is familiar enough to connect it with a different interval. Most of the time.

A couple days ago, though, the game played an interval that seemed unusual. I stopped and contemplated the sound. I wasn't sure whether I recognized it or not. I clicked the box to play the sound again... and then again... and then again. With each repetition, it became clearer that I didn't recognize the sound. But what made this so strange was that I wasn't actually identifying the sound as "the one I don't recognize". Instead, it was like there was literally a hole in my mind where something should be, something normally triggered by an interval sound. The emptiness was conspicuous because, whatever this something is, it is present for all the other intervals. I hear the interval, I make the connection to the something, and then I can press the key or speak the name. According to what I've been writing, I would term this something as the "musical idea" that is the interval; and it was unnerving to play this interval sound and feel, literally, an emptiness in my mind where any other interval would have found its place. I played the sound a few more times, and then I guessed (correctly) that it was a minor sixth. Weird.

What will it take to make this connection, and to give the minor sixth its own identity? Will I need to explicitly and consciously identify certain of its characteristics, or will my mind automatically begin to recognize its unique sound without my trying to find it? In any case, though, the empty space was not waiting for some kind of label. It was waiting to become an idea.

Thanks to Frances, the tree in my back yard now has considerably fewer branches attached, but that seems to be the worst of it (except for losing net access-- I went down for three days). Nonetheless, the severe weather has given me extra time for unscheduled activity, and I've taken some of that time to add more research summaries and references. In doing so, I find that some things are going to demand further comment than a short summary.

Tonight I was particularly interested in Russo, Windell, and Cuddy's 2003 study. The authors, noting that many people have theorized a "critical period" in childhood for learning absolute pitch, actually attempted to teach it to children. (Duh!) I was immediately intrigued by the abstract, which said that children aged 5-6 performed better than ages 3-4; this seemed to contradict Taneda's assertion that by the time a child is 5 years old it is probably too late. So I looked at the method-- and this hooked me further, because they used exactly the perceptual strategy that I intend to implement to teach adults in the pitch game for the Ear Training Companion (a strategy drawn from Gibson's theories and Fletcher's method). Specifically, these experimenters asked the subjects to identify a "special note" (middle C) among a set of choices.

Then I examined their results. The most potent summary is drawn in this quote:

The older children, and some of the younger children, adopted a perceptual strategy of moving the pitch boundaries for identification from the full range of tones to a narrow category around the single tone. In contrast, adults focused on a subset of tones within the set of alternatives.

I've been trying to figure out a way to explain this verbally, but I don't think anything demonstrates it better than the charted results.

You can clearly see the children's perceptual strategy; they all started with random distributions, and gradually pushed the boundaries inward towards C (this is most obvious in Week 3). The adults' categorical preference is equally clear; even in the very first week, they selected tones which sounded sort of like the target tone. This demands an important perspective for Week 6; although the adult and younger-children graphs look somewhat similar, the children are making a perceptual judgment and the adults a categorical one.

These results could support a number of different ideas, but these predominate in my thinking:

There is an evident distinction between the learning strategies of children younger and older than 5 years. Therefore, it seems reasonable to expect that Taneda's method could work for 3-4 year olds and not for older children-- and it is equally reasonable to expect that the ETC/Fletcher method (or "special note" as they call it in this study) could work for older children but not for younger.

The adults, as I might have predicted, adopted a categorical rather than a perceptual strategy; that is, they looked for higher-order features rather than lower-order characteristics. They didn't evaluate spectral sound quality; they selected tones. However, it is undeniable that they did so successfully, and it seems possible that further training may confound the categorical preference and promote perceptual learning. (These results also explain why I keep making the same errors in Chordsweeper despite hours of practice.)

The older children, still relatively naive in perceptual analysis, responded to the perceptual learning task by seeking lower-order characteristics. This is not speculation, but a known developmental attribute of perception.

This experiment provides direct evidence that the ETC/Fletcher method will work for teaching absolute pitch to older children and adults.

In their abstract, the authors claim that this experiment "...provide[s] strong support for a critical period for absolute pitch acquisition." This got my dander up, and I was ready to argue against it-- but in the full text, the authors change their conclusion, saying instead that the data "provide the first unequivocal experimental support for a critical period in which tone-label acquisition is privileged." This statement I find harder to argue with, but there are still two items which should not be overlooked. First, that if they had used Taneda's method instead of Fletcher's, their results with the two child age groups would probably be reversed; their assessment of which years encompass the critical period would change, and the different results would call into question whether the critical period is ultimately a biological or a methodological issue. Second, children and adults employ different learning strategies (categorical vs perceptual)-- as such, this data doesn't really show that tone-label acquisition is privileged in children, but that their tone discrimination is more effectively accomplished. Tone labeling, according to my understanding, is made possible by accurate discrimination, but it is neither a necessary nor an inevitable consequence.

While waiting for the next hurricane, I'm making progress on various fronts. Even though I have been able to read and summarize only a few new articles, I have been gathering many articles through the library database system. I'll keep picking away at this list until I've reached back as far as the electronic systems can manage, and probably on some holiday (come December, most like) I'll attack the library's paper journal holdings with my laptop and portable scanner.

I have been surprised to learn that absolute pitch is not only associated with a superenlarged left planum temporale, but also with a larger than normal right planum parietale. When I find time, I need to discover what action this part of the brain is normally associated with.

I'm also intrigued by the description of sign language as "spatial" language, and I wonder if similarities will exist between it and musical "space".

Today, though, I was thinking mainly about Morton's 2001 study of children's perception of emotion in speech. What it seems to describe is that a newborn's perception of language sound begins as completely musical-- or, rather, no distinction exists between "language" and "music", and as a child approaches 5 years old they become increasingly aware of the difference. Then, having reached 5 years old, a child's comprehension of language utterly excludes the musical component (which is why, for example, children have trouble understanding irony or sarcasm), and this persists until about 10 years of age. After this time, the literal content of linguistic messages fades in importance until, as adults, our reception of language is drawn primarily from "paralinguistic cues".

This is an appealing model for explaining the child's acquisition of language or the adult's failure to learn. Between ages 5 and 10, a child is listening only to the sounds of language; after age 10, literal sound becomes decreasingly important, until in adulthood it is the least part of the communication stream.

I'm forced to wonder, though, if there is a comparable model for musical development. On the one hand, there might be a period in which a musically-trained child listens to music without any regard for linguistic content-- this would suggest a reason why I always have such difficulty listening to lyrics in songs. On the other hand, this model could be seen as compelling support for unlearning theory. If, in countries with non-tonal languages, it is the child's natural tendency to learn its native tongue by subsuming absolute sensation in favor of relative perception (such as contour and harmony), then might not the same be expected in the child's acquisition of musical language?

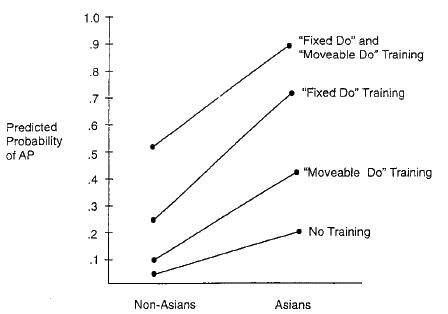

Among the various articles which I have newly found, there is a chart which did catch my eye. Recognizing that "early musical training" was not enough to guarantee a child's acquisition of absolute pitch, and responding to the observation that there are many different types of musical training, Gregersen et al (2001) conducted a survey and achieved the following results:

I remember that the Yamaha method boasted an (approximately) 85% rate of absolute pitch acquisition in Japan; if I'm reading this chart correctly, then that must be the topmost dot on the right. When I begin looking at musical methods for children-- Kodaly, Suzuki, Yamaha, and possibly Dalcroze will be my primary targets, although I'll need to compile a fairly complete list-- I'll have to put them into these categories.

This certainly seems in keeping with the Morton model. In moveable-do only, or with no training at all, the absolute musical sounds are ignored and lost. But if fixed sounds are made a meaningful part of the language, and the student is further trained to recognize, infer, and manipulate the contexts of those individual sounds through moveable-do orientation, then we're on the opposite pole from those Portuguese illiterates. Under such training conditions, the most likely explanation for why a student wouldn't develop absolute pitch-- aside from the apparent fact that the curricula are designed to teach fixed do rather than absolute pitch-- would seem to be a lack of neural (genetic) capability.

UPDATE (9/16): Gregersen and his collaborators (who created the chart I've inserted above) separated Asian and non-Asian to imply that-- because Asians are not always exposed to musical training as children but still have higher proportions of absolute pitch-- Asians probably have a genetic predisposition towards absolute pitch. But I've just been reminded of a point I made some while ago: Asians speak Asian languages. Asian vowels are specific; Asian speaking is tonally precise. Western speech is saturated with diphthongs and slides. Why hypothesize a mysterious genetic component when the language itself has such an evident effect (as Diana Deutsch has demonstrated)?

Copyright law durations have changed over the years. Especially with Disney's recent move, it seems obvious that the changes have occurred as "estates" (that administered and enforced the copyrights) saw the inevitable disappearance of their golden geese and used their corporate lobbying power to keep money in their own hands. As I understand it, the simplest rationale behind copyright expiration-- just as with patent expiration-- is that the creator deserves to be rewarded for their efforts, but it's important for society-at-large to be able to benefit overall. In any case, all works published prior to 1928 are currently in the public domain, and as a result I will be sure to post any early articles for you on this site.

In this case, I've got hold of Meyer's 1899 publication which, it seems, everyone continues to make reference to, even in articles from this year-- and, because of its celebrity, I admit I expected more. It's merely a two-page brief which can be summarized as follows: "We tried learning musical sounds, and we succeeded at above-chance levels; but it was such hard work that we gave up, and now we've forgotten everything we learned." Still, this is not an insignificant statement, because their experiment demonstrated that the ability to recognize pitches was not a physiological mutation. I suspect it continues to be referenced for largely the same reasons I suggested on September 5, and also to illustrate the historical perspective of how, over 100 years later, their basic attempt is still relevant.

I should have just gone to sleep when I had the chance-- but no, I thought I'd do a quick summary of one of these articles, and now I'm too intrigued to leave it alone. The article is Heaton's 2003 study, "Pitch memory, labelling and disembedding in autism." (The link is to her full abstract.) Heaton did a series of three experiments using autistic children between 7 and 15 years of age.

In the first experiment, she wanted to make sure that the children could recognize tones. She trained the children to associate four different tones with four different animal pictures. The autistic children did become able to identify the tones using the pictures-- and were better at it than non-autistic children. This is not too surprising; other papers have shown that autistic children are predisposed to absolute perception.

In the second experiment, Heaton played three-tone chords and found that the autistic children were able to recognize which of the four tones was missing-- again, at better rates of success than the non-autistic children.

In the third experiment, Heaton changed the setting completely. Using twenty-four different major and minor triads, she played a chord followed by a comparison tone. The autistic children were no better at this task than the non-autistic children. Even when the actual tones of the chord were played individually prior to sounding the chord (which was again followed by the test tone), the autistic children showed no advantage.

I agree with Heaton's basic conclusion-- that autistic children process pitch sensations differently (I believe this is because their minds seek low-level characteristics rather than high-level features)-- but I disagree with Heaton's assessment of the results of Experiment 3. She writes that the results show that the autistic children, "...like controls, succumbed to the Gestalt qualities of the chords." However, without "pre-exposure" to pitch sound, pitch sound remains undifferentiated sensation. Like the Portuguese illiterates, if you don't know that the complex sound has components, your mind will not find any components, regardless of your autistic tendencies. The children didn't "succumb" to anything; the pitch sounds never existed, so how could they be disembedded?

In any case, though, whatever I might think of Heaton's methods or conclusions, the important thing is that her experiment provides further direct support for Taneda's method. Like Taneda, Heaton used graphemes (the animal pictures) to "fix" the identity of phonemes (the pitch sounds)-- and her data shows that it worked.

Before the weekend I picked up Jane Siegel's 1971 dissertation, The Nature of Absolute Pitch, which had arrived through interlibrary loan. It's not particularly revealing of anything. She presents four hypotheses of why absolute pitch occurs; she dismisses three of the hypotheses based on existing research, and tests the fourth with an experiment of her own. The experiment fails to support the fourth hypothesis, but she goes down fighting, insisting that this fourth hypothesis is still the "most plausible".

I found something else interesting in the paper, though. Siegel mentions how Miller's Magical Number Seven, Plus or Minus Two explains that the ability to categorize a spectrum is generally limited to seven choices (such as color's ROYGBIV). If this is so, wonders Siegel, how can people with absolute pitch identify 48 tones or more? My answer, when I read this, immediately popped into mind. The Secret of Lesson One explains the illusion of octaves and implies that the listener is actually identifying a maximum of only twelve tones. Furthermore, Miller, in that same Magical Number Seven article, shows how the addition of a second dimensional attribute increases memory capacity. If "pitch" and "octave" are considered to be separate characteristics, then Miller has already explained how it's possible to recognize and identify dozens of different tone sounds from a base of only twelve pitches.
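To make the two-dimension idea concrete, here is a minimal sketch in Python. It assumes MIDI-style note numbering (60 = middle C, written C4), and the names `PITCH_CLASSES` and `split_tone` are my own inventions for illustration; nothing here comes from Siegel or Miller. It only shows the arithmetic: 48 distinct tones reduce to 12 pitch names and 4 octave numbers.

```python
# Hypothetical illustration: the names and the MIDI numbering are assumptions,
# not anything taken from Siegel's or Miller's papers.
PITCH_CLASSES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]


def split_tone(midi_note):
    """Split a MIDI note number into (pitch name, octave number)."""
    octave, pitch_index = divmod(midi_note, 12)
    return PITCH_CLASSES[pitch_index], octave - 1  # MIDI 60 -> ("C", 4)


# Forty-eight consecutive tones, C2 (MIDI 36) through B5 (MIDI 83).
tones = [split_tone(n) for n in range(36, 84)]
pitches = {pitch for pitch, _ in tones}
octaves = {octave for _, octave in tones}

print(len(tones), len(pitches), len(octaves))  # 48 12 4
```

Seen this way, the listener never has to hold 48 categories on a single dimension; each tone is a pairing of one of twelve pitches with one of four octaves.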

However, I considered, twelve is still more than seven-- it's even more than seven plus two. Perhaps there was something strange going on..? Then I remembered that there's other research which agrees that absolute listeners have greater difficulty identifying the piano's "black notes" than they do the "white notes" (such as Miyazaki's 1988 study, which produced the graph shown here, showing slower and less accurate responses for all non-white notes).

In the publications in which this effect has been observed, the authors have usually speculated that the reason for this discrepancy is tonal; the black notes are more poorly known because they are outside of the "familiar" C-major scale. Now that I look at it, it seems most likely that the poorly-known black notes are due to Miller's magical number seven, dividing the spectrum into seven scalar notes, with the others known less confidently, but no less clearly, as variants of the other seven. (This seems to make sense, intuitively; I have absolute color, myself, and if I see something that is "maroon" I'll first recognize it as "red" before making a further distinction.) This could be tested by training some child to categorize the scale according to seven tones other than the C-major scale-- but I doubt that test will ever occur.

In other news, I'm starting to get some information that, if I'm understanding it correctly, implies that the whole genetics vs training argument is largely based on an ethnically chauvinist attitude. Almost none of the many, many articles that I've been looking at make any reference to Japanese publications, research, or pedagogy. Yet Japanese musical training has resulted in a high incidence of absolute pitch in its students (as high as 85%) and in the general population. Miyazaki's experiments are aimed at existing ability in adults, rather than its development in children, so I can understand their omission-- but the overall lack of attention to Japanese tradition seems almost like willful ignorance.

When I'm writing on this site, I try to remind myself to be wary of when I'm just engaging in logical speculation versus when I'm reporting scientific fact. Although I have confidence in my powers of synthesis, it's important to remember that logical "proof" is only definitive in mathematics (if even then). Just because something sounds reasonable based on the available information, that doesn't mean it's true. So, naturally, I get more than a little excited when I discover that scientists have not only been drawing the same logical conclusions as I have, but they've conducted legitimate experiments to test those conclusions.

In this case I found an article that relates to my June 26 entry. I had suggested then that tonal languages are those which have a linguistic component "one step higher" than the phoneme-- one represented by a single pitch sound rather than a combination-- and now I've got research that supports the idea. In 1998, Jack Gandour and his group conducted brain-scan studies of Thai and English speakers, because Thai is a tonal language and English is not. For each group, they played language with tonal qualities, language without tonal qualities, and tones without language qualities. What they found was this: Thai speakers processed pitch sound in "Broca's area" (an area known to be a language center) when it was linguistically relevant. When the pitch sounds were not in a linguistic context, Thai listeners did not activate Broca's area. The English speakers, unsurprisingly, showed no linguistic activation for pitch sounds. The authors offered this perspective for their results:

This cross-linguistic comparison provides support for the view that complex auditory processing leading to speech perception undergoes discrete processing stages, each involving separate cortical areas of a distributed neural network. Whether linguistic or nonlinguistic, perceptual analysis of auditory stimuli occurs in the temporal lobe. However, when a phonological decision is to be made, subjects must access articulatory representations involving neural circuits that include Broca's area.

This pokes open a question which I had thought closed, with a slight semantic change: do people with absolute pitch hear musical pitches phonologically? I wrote to Daniel Levitin back in February, and he told me that absolute listeners process phonemes and pitches in different areas of the brain, and I thought that finished the issue. But Gandour's experiment shows that tone-language speakers do process both phoneme and pitch in Broca's area-- when the pitch sounds are linguistic. Could a change of conditions have produced a different result in Levitin's absolute listeners? Or is there a different kind of "phonological" processing for musical language than literal language? Scientists have generally assumed that the left planum temporale activation is involved in tone labeling, but what if it actually is processing the "phonological" pitch?

Although that question will undoubtedly remain open for a while, the other matter seems to be confirmed: tone languages do appear to have a "phoneme" that is a single pitch sound.

Although these days I only drive to and from rehearsals (and the grocery store), I've started using the Relative Pitch Monster Course in the car. I hadn't expected to use the Monstercourse myself, because I play Interval Loader at home all the time-- but because I play on my laptop, rather than on my Macintosh, I don't play the singing games. And, now that I'm rehearsing for Anything Goes, I'm learning how necessary the singing games are.

I had a heck of a time singing my solo. Initially I couldn't sing the first note. Thanks to the training I have done, I could hear every time that I was singing the fifth-- but I wanted to produce the octave. Later, when I transferred the music from the page to the computer, I was surprised to see that the first three notes weren't do re do, as I'd been singing, but do re ti. And I couldn't get that ti. If I just sang carelessly, I'd always produce do re do, but if I remembered that the third note was "further down", I'd inevitably sing do re la. I soon recognized my problem: the target note was doubly dissonant. It was a major seventh scale degree, a minor third down from the previous note. My voice wanted to be consonant, so it would produce either the tonic or a major third down.

Because I haven't trained myself to sing specific intervals (either an M7 scale degree or a descending m3), I tried something I could do. After singing the second note, I imagined an m2 that ended on do and sang its first note. This worked, but I was never sure whether I had the right note unless I actually sang the do. I gave up, and the next day I spent a little time with the music director, who helped me imagine the octave sound in the context of the song's intro.

This reminded me of Miyazaki's experiments, and his observations of absolute pitch as an "inability". In music, just as with any other activity, you apply the skills you have, even if those skills are not the most efficient or the most effective for accomplishing the intended task. In this case, I successfully found a way to produce ti, but it was both inefficient and ineffective. I needed better training-- a different kind of training-- to produce the correct note. By parallel, it seems clear that people with absolute pitch aren't hindered by their ability unless they fail to develop the relative skills which are necessary for good musicianship.

I just took a look at Keenan et al's 2001 study "Absolute Pitch and Planum Temporale". I'd read plenty of times that absolute pitch listeners have an enlarged left planum temporale, and I wasn't expecting much-- so I was surprised to see the authors put a new spin on the topic. According to Keenan and his group, the left side isn't enlarged. The right side is shrunken.

Their subjects were all adults, so it's not possible to tell how the asymmetries originally developed-- but regardless of its origin, shifting responsibility for absolute pitch from big-left to small-right creates a new perspective, summarized thusly by the authors:

...early developmental pruning in the right PT may create an anatomical dominance of the left PT. This in turn might create a functional dominance of the left PT over the right PT, which might be necessary for the acquisition and/or manifestation of absolute pitch. This anatomical difference is likely due to factors other than early musical training or music exposure and it might indicate that young children with an increased leftward PT asymmetry might develop AP if they have an early music exposure.

In other words, a child's right PT may be too small to process musical sounds normally. Overstressed, it recruits the left side, which interprets the musical sound in its own way-- absolutely. This hypothesis could explain a few things:

- why absolute pitch can develop with no musical training

- why early exposure to ordinary musical training can induce absolute pitch

- why musicians with absolute pitch can have difficulty with relative tasks

- why early musical training of fixed and movable do is more likely to induce absolute pitch

Although this does raise new doubts about absolute pitch as an inability-- perhaps absolute listeners really can have trouble learning relative perception-- it's this last item that I'm particularly interested in. I had wondered why relative training could induce absolute pitch. It seemed reasonable that fixed-do and movable-do combined would create better musicians, but Gregersen's chart (which I reproduced in the September 11 entry) indicates the likelihood of absolute pitch can be more than double that of fixed-do training alone. Why would this occur, especially if the musical training system is not designed to teach absolute pitch?

This new perspective suggests an answer. At times when the training required a student to use movable-do and fixed-do skills simultaneously, the right side would naturally recruit the left for the extra processing power, and absolute pitch would develop. However, if a child's right side is adequately large to handle the load, the left side would not be activated-- and that student would be part of the 15% who don't acquire absolute pitch.

I am assuming that the right planum temporale is primarily responsible for processing musical sounds. It does seem implicit from Keenan's paper, and a quick Google search seems to support the assumption. Overlooked in this study, though, is the potential influence of the planum parietale, whose right side is stronger in absolute listeners. I don't immediately see what the planum parietale is for, but now I have a couple new studies that I'm looking forward to reading.

I've been reading and re-reading Itoh, Miyazaki, and Nakada's 2003 study about ear advantage. I keep looking back at it because I can't decide whether it's only supporting what I've already said or opening up entirely new avenues of thought. Let me give you an excerpt from the abstract. "Dichotically" in this case means "one tone in each ear".

Two piano tones paired at various pitch intervals... were presented one note in each ear to twenty absolute-pitch possessors. As a result, a weak overall trend for left ear advantage (LEA) was found, as is characteristic of trained musicians. Second, pitches of dissonant intervals were more difficult to identify than those of consonant intervals. Finally, the LEA was greater with dissonant intervals than with consonant intervals. As the tones were dichotically presented, the results indicated that the central auditory system could distinguish between consonant and dissonant intervals without initial processing of pitch-pitch relations in the cochlea.

Their first experiment was to test for an ear advantage in recognizing and naming single tones. They predicted that a right-ear advantage would be evident, and so would I have. They expected it because the subjects respond verbally; I'd expect it if the pitches are being processed phonemically instead of musically. Either explanation implies a left-hemisphere advantage-- but they found no advantage for either ear.

Their second experiment tested for ear advantage in recognizing and naming tones when presented to different ears simultaneously. They found that, the more dissonant the interval, the greater the left ear's advantage-- and that the more dissonant the interval, the harder it was to identify the pitches.

I keep looking at these facts and wondering what they imply. Why is there no right-ear advantage in naming single tones? Why is there a left ear advantage for naming simultaneous tones? Why does the left-ear advantage increase with increasing dissonance?

What seems apparent is that, even for an absolute listener who claims not to hear relatively, even when the naming task has nothing to do with the sounds' relationships, absolute information is inferred from relative sound. This is indeed parallel to the linguistic data which indicate that we don't hear phonemes but only infer them-- but then, if the tones are being extracted from structural analysis, shouldn't that produce a right-ear advantage?

At this point, the strongest conclusion which I think could be drawn from this is that absolute listeners perceive relative sounds even when they think they don't. If they didn't, then tonal relationships would surely have no effect on their ability to recognize pitches. Perhaps more important, though, is the possibility that absolute listeners hear the relative sound first. I've previously suggested a model in which pitch information arrives "raw" at the tonotopic map and then is assembled into relative sounds based on the listener's experience and expectations. Now I'm intrigued to speculate that absolute listening occurs only after the relative assembly and not before-- this would support my assertion that training an adult to hear in absolute pitch shouldn't attempt to intercept pitch data before it becomes relative, but rather should create new methods of decoding relative information.

This data answers questions I haven't yet thought to ask, and it raises questions I'm not able to answer. It's possible, too, that the separate pitch sounds are interfering with each other rather than being inferred from the relative sound, even though this is occurring neurally instead of physically. I'm keeping an eye on this one.

I've found and posted both Whipple's 1903 article Studies in Pitch Discrimination and Boggs' 1907 followup Studies in Absolute Pitch. I didn't retype them, but scanned them; as I'm rushing between class and rehearsal I haven't had time to meticulously edit them, so if you spot any typos please let me know.

What I find most astonishing about this pair of articles is that, despite being published a century ago, both of them make observations that are essentially the same as those being made today. Neurologically and linguistically, we're well ahead of where we were, but these psychological observations are just as fresh now as they were then. By comparison, a different article in the same volume of The American Journal of Psychology was dedicated to a very serious discussion of whether there were actually such things as primary colors. It took me a while to realize that this was the topic-- the term "primary colors" was not used, and obviously color science has advanced considerably since then. Not so pitch science.

It's a lot to read, so I'll refrain from comment for the moment-- but perhaps they stand well enough in the context of what I've already written.

When I'm looking for the articles I've sourced, I also scan the journals' tables of contents to see if there are any other relevant papers, and there are usually a few things that are worth looking over. What I have just found today, I'm thrilled to report, is something I had wanted but didn't think existed-- a thorough case study of someone with color anomia. Despite the havoc it's going to wreak on my sleep schedule, I've scanned and tidied up the text so that you can read it right away.

I'd say that it seems to give an idea of what it could be like if you had absolute pitch and were trying to understand the perception of someone who didn't. There certainly seem to be obvious parallels. The description of "brightness" (or intensity) seems similar to the squares at the top of the Secret of Lesson One, and the subject's misperception of visual contour makes me wonder if there is a similar confusion that occurs between pitch perception and musical contour.

I've scanned and checked and posted Helen Mull's 1925 study, The Acquisition of Absolute Pitch. I was again amazed that, like the other articles, its contents don't seem to be dated-- and not because any of these scientists were particularly foresighted, but because, it seems, absolute pitch research has done very little but spin its wheels for the last century. This 1925 study is essentially a more thorough version of Rush's 1989 dissertation. If you don't feel like reading all the words and numbers, skip ahead to the summary of results and conclusions and you'll shake your head too.

The more I look through these older articles, the more I see the lack of progress. For another example, Jeffress' 1962 publication (nearly 40 years after Mull's) points out that absolute pitch is probably not genetic because musical training tends to run in the family-- which is exactly what Levitin said in 1999 and Baharloo said in 2000 (grudgingly, as a result of the data he'd collected).

I should qualify: research on absolute pitch acquisition and training has made very little progress. Our understanding of pitch perception, neural processing, and aural cognition in general has increased tremendously. Studies like Shepard's endlessly-ascending tones (1964), Zatorre's brain scanning (in the last decade), Trehub and Trainor's attention to infant perception (since the 80s), and many others have created a full and vivid picture of what's going on in our minds when it comes to perceiving and processing pitch sounds. But the same debates keep recurring, and the same questions keep being asked and answered: "where does absolute pitch come from, and how do you get it?" That's what we need a definitive, scientifically demonstrated answer for. I'm not going to stop until we have that answer.

I referenced the Shepard tones in my last entry; today I've read the actual article, and I understand more clearly its relevance to absolute pitch research.

Bachem, as early as 1937, argued strongly that absolute pitch judgments were based on tone chroma and not tone height. He further insisted that these two characteristics were separate and distinct qualities of a musical tone. This type of thinking led to the helical model of pitch, which presented the musical scale as a circle of twelve tones which spiraled upward in height (I've described this same model by analogy, using color brightness, in the Secret of Lesson One). Noting this, Shepard made the following logical deduction:

The fact that one of the two components of pitch is circular in the helical model raises the remarkable possibility that, by appropriately exaggerating that component ([that is], tonality), one might be able to bring about a breakdown of transitivity in judgments of relative pitch. In the extreme case, if the dimension of height could be suppressed altogether, all tones an octave apart would be mapped into the same tone; that is, the tonal helix would be collapsed into a tonal circle. Judgments of relative pitch should then become completely circular in the sense that there would be no highest or lowest tone in the set but only an isotropic ring in which every tone has both a clockwise neighbor that is judged higher in pitch and a counterclockwise neighbor that is judged lower in pitch.

This is, of course, exactly what he accomplished. But the question immediately arises: why should there be a circle at all? If judgments of tone chroma are absolute, then why should they have any relationship to each other-- even a circular one? It's not an illusion of relative pitch; Shepard included an absolute listener among his subjects, and that person had the same experience as the normal (relative) listeners.

The answer comes partly from Korpell's study of the following year (1965). Korpell wanted to know-- what is tone chroma? He manipulated overtones and presented the resulting sounds to absolute listeners. He determined that the subjects' evaluation of "chroma" was based on the fundamental frequency of the tone, not the overtone structure of the complex sound.

Korpell's work further informs Shepard's own explanation of the effect. Shepard believed that the illusion of ascension was due to the tones' proximity. His tones had no discernable height, but they were a single semitone apart. He offers the following analogy to explain why this then forces a high/low choice:

Consider, in particular, a long row of identical light bulbs in which, say, every tenth bulb is illuminated. Imagine, now, that all of these lights are simultaneously turned off and the adjacent lights to the right of these are simultaneously turned on. The invariable result, of course, is that the pattern of illuminated spots is seen to shift, as a whole, one position to the right. Note, however, that the physical change would be equally consistent with a shift of nine positions to the left. But this is never perceived.

If all the lights were to shift by five, of course, the resulting movement would be completely ambiguous; the observer would make an unconscious decision about the direction of movement which might not agree with another observer's choice. This was later demonstrated aurally by Diana Deutsch's tritone paradox.
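The light-bulb analogy reduces to a small piece of circular arithmetic, sketched below. This is only an illustration of the proximity idea as described above, not anything taken from Shepard's paper; the function name `perceived_shift` and its return convention are made up for the example. It assumes the observer always picks the shorter way around the circle, and it treats the exact halfway step as ambiguous.

```python
def perceived_shift(step, period):
    """Return the signed shift an observer would most likely perceive.

    step   -- physical shift in positions (bulbs or semitones)
    period -- spacing of the repeating pattern (10 bulbs, 12 semitones)

    Positive means right/up, negative means left/down, 0 means no change,
    and None means both directions are equally short (ambiguous).
    Assumption: the shorter path around the circle always wins.
    """
    forward = step % period        # distance travelling right/up
    backward = period - forward    # distance travelling left/down
    if forward == 0:
        return 0
    if forward < backward:
        return forward
    if backward < forward:
        return -backward
    return None                    # halfway point: direction is undecidable


# Every tenth bulb lit: one step right is never seen as nine steps left.
print(perceived_shift(1, 10))    # 1
# A shift of five bulbs is exactly halfway, so the direction is ambiguous.
print(perceived_shift(5, 10))    # None
# Twelve pitch classes: a tritone (six semitones) is the ambiguous case.
print(perceived_shift(6, 12))    # None
```

With twelve pitch classes the ambiguous step is six semitones, which is the interval used in the tritone paradox.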

These demonstrations make it possible to conclude that tone chroma is a single characteristic of a tonal sound, and that the "chroma" is perceived as a function of the tone's spectral frequency. It is not the same as the frequency itself-- because perceived pitch can be affected by various factors-- but it arises from that frequency. One spectral frequency is different from another because the cycles-per-second (Hz) which defines it is either faster or slower, and this allows a listener to perceive each tone as "higher" or "lower"; however, tone chroma recognition is a categorical judgment, not a relative one.

A couple new old articles now posted: The effect of training in pitch discrimination (1914) and Effects of practice in the discrimination and singing of tones (1917).

As reading material, they're more than a little dry; I'm sure you don't need to examine them too closely. Their historical significance, I think, is that (at this time) it seemed possible that absolute pitch was due to a physiological difference in listeners' ears-- one which allowed them to make finer-than-normal discriminations between pitches. The 1914 article claimed that there are indeed physiological limits; that is, tonal discrimination can be improved with practice, but only to a specific maximal ability determined by the listener's aural mechanism. The 1917 article suggested no such limit, but noted instead that discrimination of specific tones improved when a subject was trained to sing them.

It seems intuitively possible that tonal discrimination could be due to physiological limits; I speculated about that myself on June 22 of last year. On the other hand, with a non-absolute listener, it seems possible that the undifferentiated sensation that is pitch sound could perhaps be more difficult to detect than the precise categorical spectrum of absolute hearing-- not because of a physical difference in the ear's ability, but because the absolute listener would know how to mentally fix pitches' identities and, therefore, be more able to detect a noticeable difference.

In either case, it has since been determined that "tone chroma", rather than a fine-distinction ability, is what makes absolute judgments possible. I think what I'll find in these articles is that a subject becomes more able to recognize tonal qualities through identification of the tones-- in this case, by singing, and in other cases by graphemic association as well.

Another new old article: Memory for Absolute Pitch (1917). This article states unequivocally that tone chroma is the root of absolute pitch ability, although Baird doesn't use the term "tone chroma". Baird uses the similar term "clang-tint" to refer to timbre instead, and for chroma quality he says "c-ness" or "d-ness".

Baird is skeptical of Meyer's 1899 study; this is ironic, because in 1956 Meyer responded (to Bachem's 1955 paper) saying that the idea of "tone chroma" was nonsense. I can understand how the two articles I previously posted, being Baird's contemporaries, would still need to examine the question of discrimination versus chroma, but it's strange how, 39 years later, Meyer was still stuck on the 1917 issues.

Still another new old article: A Study of Tonal Attributes (1919). The author played tones for six different observers and asked the observers to evaluate the tones based on characteristics like "brightness" or "volume" or other subjective attributes.

In addition to these subjective qualities, the author also asked the subjects to listen for vowel sounds in the tones. Vowel listening, in practice more than 80 years ago! And, as I discovered myself, the subjects reported that the vowel sounds have to be "thought into" the tones.

Three more articles posted: The inheritance of musical talent (1920), The method of absolute judgment in psychophysics (1928), and A new classification of tonal qualities (1929). My initial impression of them is that the 1920 article may be a pro-genetic counterpoint to Copp's perspective, the 1929 one seems to be an early description of the now-familiar pitch helix (his rationale for its tapering bell shape is interesting), and the 1928 study appears to be an answer to the question "how do people make absolute judgments, anyway?"

I'll probably comment further on that 1928 paper in a little while; today I realized that, after my recent binge of acquiring articles, I now have more than 200 articles to look at and evaluate and (probably) summarize. Should be interesting...

Today I remembered to add to the library page four Japanese-language books on absolute pitch. I don't know anything about the Japanese language, unfortunately, but if you do then you might find these books handy. And maybe then you'd write and tell me something about them.

I've also summarized more articles on the research page. Some trends are evident:

Since 1899, there have been repeated attempts to teach adults to recognize tones, and every one of the attempts has been successful. However, in every case, there have been detractors (most vocally, Bachem) who claim that tone recognition is not actually absolute pitch.

"Tone chroma" was explicitly recognized in 1925 as the basis of absolute pitch judgment. In the following decades, although others (notably Neu) speculated that the ability was, instead, a physiological discrimination or a memory for tones (a la Meyer), tone chroma has won out.

Since the 1900s, the genetic argument, the response to it, and the subsequent rebuttal have been exactly the same: (a) the ability aggregates in families, therefore it's genetic; but (b) because a musical family is more musically oriented, the ability is more likely to be learned, except that (c) not everyone in a musical family will learn it, therefore it is genetic and not learned. Seashore and Copp debated it in the 1910s, the argument continued throughout the century, and Brown and Levitin perpetuate the exact same logic in 2003.

Pitch class (recognized by tone chroma) is best understood as a flat circle. A second quality ("height", "brightness", or "density", depending on whom you ask) is responsible for its relative position; otherwise, "higher" and "lower" relationships depend entirely on which direction you travel around the circle to reach the second tone.

Since at least 1934, if not earlier, and as recently as April of this year, the same tired explanations of absolute pitch have been consistently offered to the public in non-scientific articles:

- people who have it can name tones

- perfect pitch is not "perfect"

- absolute pitch makes transposition difficult

- absolute listeners "go crazy" when something is out of tune

- absolute pitch is musically irrelevant.

And, consistently, except for one partial effort (Russo et al 2003) and one paper I can't locate (Oura & Eguchi, 1982), every experimenter has attempted to teach absolute pitch to adults. Never to children.

I finally have specific information about Eguchi's method, quoted in Miyazaki's 1990 publication to explain why his subjects were better at naming white keys than black:

One factor that makes it increasingly difficult to acquire absolute pitch after about 6 years of age is assumed to be the development of relative pitch, which presumably acts against the acquisition of absolute pitch. Some evidence supporting this view was reported by Oura and Eguchi (1982). They trained children to acquire absolute pitch under a specially designed training program for that purpose. Of the two cases they reported, the training proved successful for one child who had begun the training at 4 years and 4 months of age. On the other hand, the training was quite unsuccessful for the other child who had begun the training at 5 years and 6 months of age and had already developed a tendency to listen on the basis of relative pitch. In this training program for absolute pitch, children began with listening to the major triads in the C-major scale and tried to memorize the triads as such. Single tones were never presented, because listening to the single tones was found not to be helpful to the development of absolute pitch. When the children learned to differentiate the triads, they could identify each single pitch too even though they had never been trained with single tones. At first, components of the C-major triad, C, E, and G, were established, and then other diatonic tones in the C-major mode followed. After absolute pitch for all seven pitches in the C-major mode (i.e., the white-key notes) were established, chords comprising notes with an accidental were introduced and then, those pitches (i.e. the black-key notes) were added to their absolute-pitch repertoire. The sequence toward establishing absolute pitch in their training program seems to be responsible for the observed difference in accessibility between the note categories.

I can see how this could work. For one, of course, each chord has a root sensation; it's like teaching a child the letter "d" from "dog". For another, as Chordsweeper has demonstrated, guided listening to chords can naturally and automatically cause the mind to extract the chords' component pitches. However, Oura and Eguchi's report was twenty years ago, and many things can change. I wrote to the Eguchi school last month and received this reply:

We use colored flags to let them identify the chords, then move on to the next step which is to identify single tone[s]. When they identify the notes, we let them use Italian syllables.

Eguchi's training method must have evolved since 1982-- at least, some value in single tones has come to be appreciated. I wonder about the use of Italian syllables (solfege); although clearly Eguchi finds them effective for her purposes, I wonder if there is any interference effect between the sound of the syllable and the sound of the pitch, or if perhaps this type of training leads to the fixed-do version of absolute pitch which Miyazaki has been consistently and persistently describing as a musical inability. I don't yet have indication of how Eguchi integrates her absolute pitch training with full musicianship.

The scientific community generally seems to have agreed that pitch can be defined by two separate attributes: tone chroma and tone height. Shepard-tone demonstrations have repeatedly established that the pitch circle (of tone chroma) is fundamentally "flat"; absolute listeners confirm that they make separate judgments of pitch class and octave designation; Warren et al (2003) even showed that the two characteristics are clearly separated in brain activity. Although some researchers have argued that pitch has additional structural attributes, and others have argued that height, being separable from chroma, is technically not an attribute of pitch but of tone, it's not these split hairs that bother me. I'm concerned about the word height.

In the first part of the 20th century, there was still some mystery about the attributes of tones. Observers seemed perfectly content to use any of the terms "height," "density," "brightness," and "sharpness," (among others) interchangeably. Picking up on this, S.S. Stevens conducted a series of studies in 1934 to determine if indeed tones were spatial-- that is, for example, were "high" and "low" pitches actually perceived to be high and low in space? His conclusion: No. Tones are not spatial.

What this necessarily implies, then, is that tone height-- or high and low pitches-- is an entirely arbitrary designation which exists and persists only through convention. The descriptive terms we use, but for whatever quirk of fate, could easily have been light and dark, tight and loose, pointed and dull, or even small and large, as any of these pairs of terms are adequate to describe the same psychological quality of a tone now called "height." And yet, none of these terms are accurate. All of them are analogical and arbitrary.

And this is why I find myself wondering why this usage came to be-- because there is a term which is at once semantically appropriate, psychologically adequate, and physically precise: tone speed. "High" tones are, literally, faster than "low" tones. 880 cycles per second is twice as fast as 440 cycles per second. [I've previously suggested that pitch should be defined as "the instantaneous velocity of an object," and you can test this yourself. Tap your desk and listen to the pitch it makes; now strike again at a faster speed (but with the same amount of force) and you'll hear a "higher" pitch.] Anyone could easily recognize that a "higher" pitch sounds like something that's moving faster, and a "lower" pitch sounds slower. Why use arbitrary terms when literal ones work just as well-- or better? Indeed, I am beginning to believe that there may be useful advantages to thinking of tone speed.

For one, any gaps between rhythm and tone are immediately bridged. You are undoubtedly aware that a pitch represents a certain "frequency", and that the frequency is measured in "cycles per second"-- but have you ever asked yourself, cycles of what? Frequency of what? Until recently, I hadn't. The answer seems to be simple enough: frequency of rhythmic pulse. The pulse is nothing more than a burst of energy. I read a publication that mentioned a threshold at which our mind stops hearing rhythm and starts hearing pitch, so out of curiosity I created a sound file of sine wave pulses on both sides of that threshold: one second each of 10 Hz, 20 Hz, 30 Hz, and 40 Hz. You may need to crank up your computer's volume to hear it, but once you do, you'll hear that the sound resembles a motor starting (putt-putt-putt-puttputtputtptptptptprrrrrrrrrrrrrr), and you can clearly hear the transformation from pulse to pitch. [Because I'm a bass, I also recorded myself singing a very low tone, zoomed in on the waveform, and visually counted the 54 pulses per second; then I cut and pasted the same pulses at different horizontal distances from each other and found that they produced different pitches.]
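If you'd like to build a similar pulse-to-pitch file yourself, here is a minimal sketch using only Python's standard library. It is not a reconstruction of the original sound file, whose exact construction isn't described here. The sample rate, the choice of one cycle of a 1000 Hz sine as the "pulse", the 0.8 volume scaling, and the names `pulse_train`, `build_demo`, and `write_wav` are all assumptions made for illustration. Only the four pulse rates and the one-second durations come from the description above.

```python
import math
import struct
import wave

SAMPLE_RATE = 44100        # samples per second (assumed)
PULSE_CYCLE_HZ = 1000.0    # each pulse is one cycle of this sine (assumed)


def pulse_train(rate_hz, seconds=1.0):
    """Return samples in [-1, 1]: one short sine burst every 1/rate_hz seconds."""
    pulse_len = int(SAMPLE_RATE / PULSE_CYCLE_HZ)   # samples in one burst
    period = int(round(SAMPLE_RATE / rate_hz))      # samples between burst onsets
    total = int(SAMPLE_RATE * seconds)
    samples = []
    for n in range(total):
        pos = n % period
        if pos < pulse_len:
            samples.append(math.sin(2 * math.pi * pos / pulse_len))
        else:
            samples.append(0.0)                     # silence between pulses
    return samples


def build_demo(rates=(10, 20, 30, 40)):
    """Concatenate one second of pulses at each rate, slowest first."""
    samples = []
    for rate in rates:
        samples.extend(pulse_train(rate))
    return samples


def write_wav(path, samples):
    """Write mono 16-bit PCM, scaled to 80% of full volume."""
    frames = b"".join(struct.pack("<h", int(s * 32767 * 0.8)) for s in samples)
    with wave.open(path, "wb") as wav:
        wav.setnchannels(1)
        wav.setsampwidth(2)
        wav.setframerate(SAMPLE_RATE)
        wav.writeframes(frames)
```

Calling `write_wav("pulse_to_pitch.wav", build_demo())` saves a four-second file (the file name is arbitrary). Passing a longer list of rates to `build_demo` makes the transition more gradual.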

Once you acknowledge that pitch sensation is essentially hyper-rhythm, that acknowledgement immediately demands a holistic perception of musical arrangement: rhythms within rhythms, structure within structure. A melody is ultimately not separated into "tone" and "meter" but is instead a unified, multilayered mesh of rhythmic patterns at different levels of magnitude.

This superstructure concept is supported physiologically by experiments conducted over the past few years mainly by Timothy Griffiths, Roy Patterson, and their various collaborators. They start from the deceptively simple observation that complex sounds which have no discernible spectral peak (such as hiss) will, nonetheless, produce a perceptually distinct pitch. Their opening salvo appears in the first words of Griffiths et al's 1998 paper:

For over a century, models of pitch perception have been based on the frequency composition of the sound. Pitch phenomena can also be explained, however, in terms of the time structure, or temporal regularity, of sounds.

Their brain-scan studies continue to develop and build on this perspective. With their data, they argue that musical listening begins with a time analysis which proceeds to pitch perception, as opposed to a spectral activation which begins as pitch perception. The auditory image, they claim, is formed as a temporal structure, and pitch information is calculated from that image (in a right-brain area called Heschl's gyrus). Further analysis of the image-- such as melody or harmony-- occurs subsequently in other parts of the brain. They hypothesize that the basis of pitch calculation, or of any melodic analysis, is the unified temporal auditory image.

I was tempted to use this as an excuse to revisit and expand my hypotheses of musical sound as movement-- octave equivalence explained as constant acceleration, melodic contour described as the movement of an implicit object, et cetera-- except that those must yet be further informed by another crazy thing that Griffiths and Patterson have managed with tone height manipulation. I don't think I can explain it better than they did, so here are some text and sound excerpts from their 2003 paper, "Separating pitch chroma and pitch height in the human brain," in which they manipulated the spectral envelopes of tones.

Demonstration of Continuous Variation of Pitch Height That Can Be Achieved Within a Single Octave

When there is a clear difference in pitch height between two notes with the same chroma, one is sometimes inclined to interpret the difference as an octave jump. The two demonstrations are entirely based on these large pitch height changes, which all sound like they might be octave jumps, but which cannot be because the entire demo takes place within one octave!

The simplest demo is File 4, which has eight notes in pairs. Six of the notes have the odd harmonics attenuated by 6 dB... two of the notes are different (numbers 3 and 7) with attenuations of 0 and 15 dB, respectively. The pitch height seems to jump down an octave on the third note and up an octave on the seventh, but all of the notes are from within one octave.

The second demonstration, File 5, is a sequence of six pairs of notes. The chroma is fixed at 80 Hz, and the height is changed by attenuating the odd harmonics X dB, where X for the six pairs is 9-9, 0-9, 3-12, 6-15, 9-18, and 9-9. The first and last pairs are standards for this demo. In the four middle pairs, the height goes alternately up within pairs and down between pairs and progresses up across the octave over the four pairs without leaving the octave and without ever presenting a true octave. At the start, between the second and third notes, the pitch height seems to drop down an octave, and at the end between the third- and second-to-last notes, the pitch height seems to drop down an octave, but the second and second-to-last notes are the same!

These demonstrations suggest that we have learned to interpret pitch height changes as octave changes because of the constraints of traditional instruments, but in point of fact, they can be much smaller steps. The demonstrations suggest there is a continuous route from a note to its octave along a line with fixed chroma, and thus there is a complete and continuous dimension of pitch height.
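To make the manipulation in the excerpt concrete, here is a rough sketch of a tone whose odd harmonics are attenuated by X dB. The 80 Hz fundamental and the six attenuation pairs are taken from the excerpt. Everything else is an assumption for illustration and should not be read as the paper's actual stimulus recipe: equal-amplitude harmonics before attenuation, twenty harmonics, the sample rate, and the names `harmonic_amplitudes` and `synthesize`.

```python
import math

# Odd-harmonic attenuation (dB) for the six note pairs of File 5, per the excerpt.
FILE5_PAIRS = [(9, 9), (0, 9), (3, 12), (6, 15), (9, 18), (9, 9)]


def harmonic_amplitudes(n_harmonics, odd_attenuation_db):
    """Amplitudes for harmonics 1..n, with odd harmonics turned down by X dB.

    Assumes every harmonic starts at amplitude 1.0 (a simplification).
    """
    gain = 10 ** (-odd_attenuation_db / 20.0)   # dB to linear amplitude
    return [gain if k % 2 == 1 else 1.0 for k in range(1, n_harmonics + 1)]


def synthesize(f0, odd_attenuation_db, seconds=0.5,
               sample_rate=44100, n_harmonics=20):
    """Return samples in [-1, 1] for a harmonic complex on fundamental f0."""
    amps = harmonic_amplitudes(n_harmonics, odd_attenuation_db)
    norm = sum(amps)                            # keeps the sum within [-1, 1]
    samples = []
    for n in range(int(seconds * sample_rate)):
        t = n / sample_rate
        value = sum(a * math.sin(2 * math.pi * k * f0 * t)
                    for k, a in enumerate(amps, start=1))
        samples.append(value / norm)
    return samples


# One note per attenuation value, all built on the same 80 Hz fundamental.
notes = [[synthesize(80.0, db, seconds=0.1) for db in pair]
         for pair in FILE5_PAIRS]
```

The arithmetic shows why the height can slide without the chroma changing: as the attenuation grows, what remains is harmonics 2, 4, 6, and so on, which are exactly the harmonics of 160 Hz, the octave above. At 0 dB the tone is an ordinary 80 Hz complex; in between, it sits somewhere along the way.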

I wonder if we're heading towards a destruction of the traditional concepts of scale and octave. Shepard's tones (1964) established the notion of a flat "pitch circle", and many experiments conducted since then have reproduced its result-- however, it has been universally assumed that a difference in height naturally causes a difference in chroma, leading to helical models of pitch that spiral around to octave equivalence. If chroma is no longer a fixed function of height, then the helix must unravel; the pitch circle will no longer be the base of a geometric model, but will instead fulfill a function similar to the color wheel, in which each color category is recognized to be an independent quality that can be manipulated along multiple continuous dimensions. Musical "space" (conceived of as distance between tones) will collapse; harmony will expand almost infinitely; and pitch relationships will be understood directly, independent of tonal context.

Addendum: According to Petran's 1932 overview of absolute pitch, it was Révész who, in 1913, came up with the idea of separating tone chroma (Qualität) and tone height (Höhe). Amusingly, Petran seems to mention Révész's dual-quality theory only for the sake of bashing it with the contrary opinions of twelve other researchers.

Something new: the Fletcher Music Method teacher's handbook. I hadn't released the book because, the first time I read it, it seemed to require both Fletcher's personal lectures and custom-designed toys-- both of which are long gone. However, I've had so many requests for this book that I took another look at it; and, to my surprise, it stands quite well on its own. The book offers good advice for teachers at any level, and it features literally dozens of ear-training and music theory games that any primary music teacher can incorporate into their classes.

I'd thought that the toys would be an obstacle, but once I began listing them I realized that any of them could be created by a teacher with basic crafts materials and a few hours to kill. Although it'd certainly be easier to buy the toys prefabricated (especially the break-apart keyboard), it's still possible for a dedicated and inventive teacher to play any of the games in the book. I wrote a preface for the book that describes all the different toys in detail.

And, despite the occasional appearance of the phrase "illustration in lecture"-- which indicates that Fletcher had more to say to those who came to her in person-- everything seems to be adequately explained in the text itself. The only thing which isn't directly explained is the pegboard she uses for teaching modulation; but she does tell you everything that you're supposed to be able to do with the board, and she includes a photograph of the board being used, and I've included as an appendix the original patent application which describes the mechanics of its operation.

So here we have the Fletcher Music Method teacher's handbook. I've posted some samples if you want to read a little bit of it. I only included two of the games in the sample because I figure that the philosophical stuff is more interesting to the casual reader. The book is aimed squarely at teachers who are teaching music to groups of 6- to 12-year-olds.

If you have previously purchased What is the Fletcher Music Method? and want to buy the handbook, please use this link for a $3 discount off the $15 price.

In other news, I've finally made it to Interval Loader's "One Note Advanced." I'm again amazed by the difference between this skill and "Two Note Advanced"; with the two-note game I have become swift and accurate with any of the 11 intervals, but as soon as I began the one-note game I was back to mixing up octaves and tritones. Nonetheless, just as when I first began Two Note Advanced, my high scores have already begun to creep upward and I'm already at Level 5. This time I'm going to keep more regular benchmarks so I can illustrate my progress more precisely.

The strangest part of playing One Note Advanced-- identifying the scale degree in movable-do-- is when the key signature changes but the target tone remains the same. I instantly and clearly recognize that I'm hearing the same note that was just played a few moments prior, and yet there's something entirely different that accompanies the tone, something I can't consciously define, something that even seems outside the tone, which I detect to correctly identify the tone.
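To make the "same note, different role" situation concrete, here is a minimal Python sketch. It assumes twelve-tone pitch classes, major keys only, and one common set of chromatic movable-do syllables; the function name `movable_do` and the sharps-only note spellings are my own illustrative choices, not anything taken from Interval Loader.

```python
# Pitch classes numbered 0-11 starting at C (sharps only, for simplicity).
NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]

# Movable-do syllables indexed by semitones above the tonic.
# The chromatic syllables use the raised forms (an assumed convention).
SYLLABLES = ["do", "di", "re", "ri", "mi", "fa", "fi", "sol", "si", "la", "li", "ti"]


def movable_do(note: str, key: str) -> str:
    """Return the movable-do syllable of `note` relative to the tonic `key`."""
    semitones_above_tonic = (NOTE_NAMES.index(note) - NOTE_NAMES.index(key)) % 12
    return SYLLABLES[semitones_above_tonic]


if __name__ == "__main__":
    # The same sounding pitch, G, heard against three different tonics.
    for key in ["C", "G", "D"]:
        print(f"G in {key} major is {movable_do('G', key)}")
```

The pitch itself never changes in this sketch; only the tonic it is measured against does, which is the part of the experience I can put into words.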

The skill progression of each game seems to be on target, too; One Note Advanced is the most musical yet. With Two Note Advanced, I was able to transcribe an audition piece from an old recording by rapidly repeating pairs of tones until I recognized their relationship. With One Note Advanced I'm already starting to identify the occasional scale degree in real time.

Partly because of this, but mostly because of what I was writing about in the last entry, I've been thinking again about the difference between "distance" listening and harmonic listening. The more I consider it, the more unimportant "distance" listening seems. Here at the school, the musical-theater singing instructor is a solfege listener, and her explanation of its value is pointed and potent: "No matter what note you hear, it can only be one of eleven sounds." When you combine this simplicity with the apparent fact that "distance" is not actually a measure between fundamental frequencies, but is instead an illusion created by overtone structure... then "distance" judgment starts to look pretty unreliable.

In my final round-up of the literature on absolute pitch I've been reminded that nothing is necessarily what I think it is. Just in regard to planum temporale asymmetry, for example, I've found various papers which assert with reasonable support that:

- the left planum temporale does not interpret language, it just processes sound information

- sometimes the right planum temporale handles language and the left handles music

- asymmetry is physiological, not functional, and its size is thus neither a cause nor an effect of its use

- symmetry results from enhancement of the smaller side, not from inhibition of the (otherwise) larger side.

Melody and harmony may not be what we've assumed. In 1940, Heinz Werner trained his subjects in a scale of microtonal steps (where each step was 0.12 of a semitone) and discovered that not only did they adjust to the new scale, they adjusted completely-- hearing octave equivalencies, major and minor sounds, and standard harmonic relationships within and among the microsteps. I probably can't explain it better than the author; perhaps you should read about it yourself.
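For a sense of just how small those microsteps are, here is a rough arithmetic sketch in Python. It assumes equal-tempered semitones (a frequency ratio of 2^(1/12) each) and an arbitrary 440 Hz starting tone; neither assumption comes from Werner's paper, and the function names are hypothetical.

```python
STEP_IN_SEMITONES = 0.12  # step size as described above


def step_ratio(steps: int = 1) -> float:
    """Frequency ratio spanned by `steps` microsteps (equal temperament assumed)."""
    return 2.0 ** (steps * STEP_IN_SEMITONES / 12.0)


def micro_scale(base_hz: float, n_steps: int) -> list:
    """Frequencies of `n_steps` successive microsteps above `base_hz`."""
    return [base_hz * step_ratio(k) for k in range(n_steps + 1)]


if __name__ == "__main__":
    # 440 Hz is an illustrative starting tone, not one taken from the study.
    for k, hz in enumerate(micro_scale(440.0, 12)):
        print(f"step {k:2d}: {hz:8.3f} Hz")
```

Under these assumptions, twelve microsteps add up to 1.44 semitones, so an entire twelve-step "scale" fits inside a span smaller than a whole tone, and a true 2:1 octave would take 100 microsteps.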

In conjunction with this, as has been illustrated, tone "height" may be an illusion created by overtone harmonics. A couple of days ago I realized that this might be why people with absolute pitch have a tendency to make octave errors. The sound files I posted show how, if a change in timbre causes a single-semitone difference in "height", but no change in chroma, a listener will naturally infer that the height change is a full octave-- an octave error in this case wouldn't represent a lack of sensitivity (implied by the listener's "inability" to judge the octave correctly) but an oversensitivity (since the listener is comparing the new "height" to a precise memory trace for that octave).

Furthermore, the particular height (or distance) between tones is an entirely arbitrary measure, not just in terminology but in perception. Any interval can seem to be any width you want it to be. Ron Gorow suggests, in his book, that you can take advantage of this by imagining small intervals to be great distances; a subject in Werner's study described how the micro-intervals suddenly seemed huge, like puppets becoming life-sized. But intervals are not one-dimensional; they have more salient characteristics than just "distance". I've discussed this to some extent already in Phase 7, and I've now encountered an article (Balzano, 1982) which shows scientifically that the perception of harmonic and melodic intervals is affected by both the tone chroma and the scale-degree characteristics of the component pitches. [Perhaps these effects are the "missing dimension" described by Werner's subjects.]

So, in essence, left may be right, function may follow form, intervals could be pitches, and octaves might be semitones... among other contradictions. The net result, though, is the same as it has been-- as I proceed, I acknowledge that I'm describing what's probably true, and any of my premises could be yanked out from under me at any given moment. As Einstein said, "No amount of experimentation can ever prove me right; a single experiment can prove me wrong." We proceed with the best available truth.

For the past few days I've been working non-stop on the replacement for Chordsweeper: Chordfall.


I'm too tired to write much about it right now, except to say that each of those little animals represents a different type of chord-- each category of animal (dog, cat, bovine, pachyderm, etc) is the chord type, and the animal's color (yellow, blue, brown, red) is the inversion of that chord.

If you bought the Ear Training Companion at any point in the past please write to me for your free upgrade (yes, all your Interval Loader scores and settings are preserved), and let me know which operating system you use.

Demo version now available-- with direct download this time. If my server gets overwhelmed I'll have to go back to the e-mail thing, but for now:

Download demo 3.3 for Mac OS Classic

It seems that, this semester, I'm directing seven plays and performing in two in addition to my regular classwork. Monday's breather has afforded me the time to finish formatting Otto Abraham's Das absolute Tonbewußtsein (1901), which should please those of you who understand German. It's too much for me to translate now.

If anyone wants to send me translations of any of the text (or point out German typos) please feel free.

I'm dialect-coaching my current show, and from this I'm learning that there are different ways to listen to accents. I'm still trying to figure out just how it works, but it seems that the two British listeners in the cast perceive an entire word and recognize when the structure is askew, while I am sensitive to the phonemic substitutions and can recognize when a sound has been replaced, even when the word is not obviously "wrong" as a result. For example, one of the American actors said the word "over" and pronounced the final R, which both British speakers (and I) noticed. But once he dropped the R, I noticed that he had replaced it with a neutral vowel-- "uh" instead of "ah"-- and the two Brits didn't notice. This seems similar to absolute vs relative listening.

I've long since rejected the definition of absolute pitch as "the ability to name tones." However, it's only in the past couple of weeks that I've given much thought to an alternative definition-- and, in thinking about it (and discussing it with Peter), I decided that absolute pitch couldn't and shouldn't be defined so simply, in any case. I have been considering that a definition of "absolute pitch" must undoubtedly be as broadly applicable as the definition of phonemic awareness... and, in fact, I just this moment looked up a definition of phonemic awareness which essentially says it:

Phonemic awareness is the ability to notice, think about, and work with the individual sounds in spoken words.

This lends perspective to the difficulties that my castmates have with their accents, and why they have to tackle each substitution as a separate task. The two romantic leads are both from Georgia, and thus use an "ih" sound instead of an "eh" sound-- words like "pen", "any", and "intention" become "pin", "iny", and "intintion". Even though they notice it after they've spoken, they find it very difficult to make the switch. The difficulty appears to be that they perceive these sound clusters as unitary. They can't just swap out phonemes on the fly, but have to redefine their concept of the whole word before they can produce the new sound consistently.

In this view I could argue that I have "absolute phoneme" while they have "relative phoneme"-- to them, the use and manipulation of an individual phoneme is only meaningful (or even possible) when it is contextualized into some idea-structure, while I can freely swap them around. And yet, my own ability to use the "absolute phoneme" skill is heavily reliant on structural comprehension. When I heard the word "over" mispronounced-- a dropped R, but with a neutral vowel-- my mind quickly recognized the incorrect structure and segmented out the phoneme. I didn't just hear that neutral vowel; getting to it was a process. A lightning-fast process, but a process all the same; if I were not familiar with the word "over" or with the British dialect, I would not have been able to identify the neutral vowel or suggest its replacement.

So you can change some of the words of that phonemic-awareness definition and you've got my definition of absolute pitch:

Absolute pitch is the ability to notice, think about, and work with the individual pitches in musical structures.

I had this in mind when I read this part of the foreword to Structural Hearing by Felix Salzer:

To the gifted and experienced musician, music is a language-- to be understood in sentences, paragraphs and chapters. The student who is still struggling with letters and words, so to speak, needs the guidance that will reveal to him the larger meanings of the musical language. ...To tell the truth, musical theory as it is generally taught consists of a more or less elaborate system by which small musical units may be identified (or written) according to their position and function with respect to the temporary tonal context. The larger units are merely labeled according to the recognizable thematic characteristics. The student who masters such a system has indeed learned something about music; but what he has learned is a nomenclature by which he can conduct a well-described "tour" through a composition, pointing out each landmark and its more obvious characteristics. If music were only such a "conducted tour" it would never have the profound and moving effect upon us which has made it perhaps the greatest of all the arts.

Miyazaki has gone to considerable lengths to demonstrate that absolute pitch is a non-musical ability-- even an unmusical ability-- but if Salzer's foreword and my own experience with language are any indication, it wouldn't appear to be "musical" no matter what test you put it to. It could only be a supplement and a complement to structural perception and production skills, and not a replacement for those skills.

This, I believe, has implications not merely for absolute pitch training but for ear training in general, because it again emphasizes the importance of the musical idea. I've mentioned before one curious effect of my relationship to the English language-- that I can pick up any piece of text and immediately begin reading it with all the natural involvement and inflection of a rehearsed performance. But this happens because I am completely ignoring the words! In just one glance, my mind "grabs" the idea represented by the textual phrases, and the words spill out naturally in service of that idea. Most people, when reading aloud, will be very aware of every word sound they create-- but I literally don't know what words I'm speaking, even as I say them. My mind is consciously occupied with the larger structure, but because of my knowledge of individual phonemes and language blends, those sounds are activated subconsciously for maximal effect.

That is, the point of learning chords, intervals, and pitch sounds seems to be to develop a facility with them to the point of using them automatically in service of the musical idea you wish to express. The more sophisticated your understanding of these sounds, the more able you will be to manipulate them to achieve a precise communication of your idea. I immediately wonder if this implies that traditional ear training is backwards-- perhaps the smaller structures should be trained as a consequence of the larger ones-- but there is a different question which has been lingering in my mind.

What is a musical idea?

One of the things lost to the hard drive crash is the mass of articles I'd scanned and gathered. I planned to comment on a couple of them, and I still may in the future once I've collected them all again, but for now I'll have to settle for the philosophical reflection rather than the hard science.

Immediately, though, here is another new old article: Evelyn Gough's 1922 publication about the effects of practice on judgments of absolute pitch. Again, I find that this decades-old research is just as timely as anything done in the past few years. Although the redundancy of these "ancient" works is becoming increasingly irritating, it nonetheless continues to underscore the lack of progress that has been made.

The hard drive crash really knocked the wind out of my sails-- but with my latest show now closed and Spring Break upon us, it's time to roll up the ol' sleeves and get back to it.

In the interim I haven't been idle; aside from everyday observations, I've been continuing to play Chordfall and Interval Loader. Since playing these two games is the only relative-pitch ear training I've ever done, I was pleased when I went to see Two Pianos, Four Hands earlier in the month and was able to identify the intervals and chords before the actors named them (except for that darned minor sixth). I'm glad that I waited until I'd completed the Chordfall benchmark routine before starting to play the game, because I have an accurate "before" and "after."
